Validity: The fifth V of Big Data

The four Vs of Big Data have been an industry standard since their introduction, but growing concerns about privacy have created the need for a fifth V: Validity.

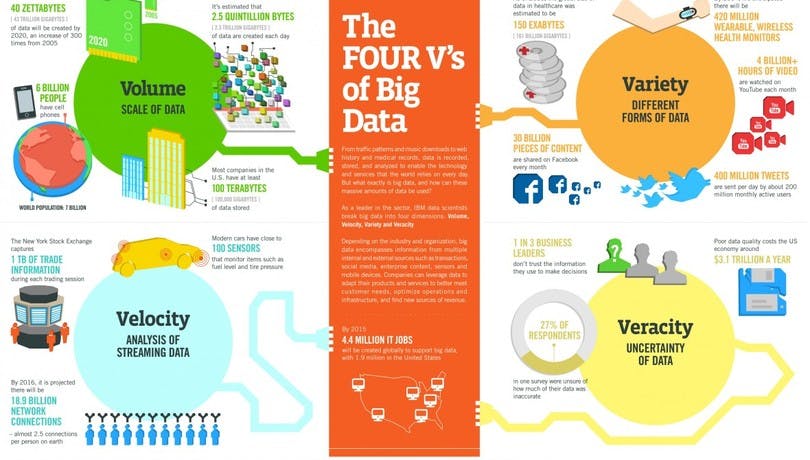

Back in 2011, IBM coined the four Vs of Big Data as a means of qualifying and quantifying the most important factors in the increasingly large datasets its data scientists were working on. Most of us would likely agree that the four pillars of Volume, Variety, Velocity, and Veracity remain just as applicable today as they were seven years ago.

IBM made numerous predictions at the time, mostly about the increasing scale of network connections, the number of sensors, the amount of data created, and even the volume of wearable devices on the market. Whilst these predictions are still pertinent, today’s data landscape looks very different from yesterday’s, for a reason that IBM had not considered at the time.

Four V's of big data according to IBM

Today there’s a new fifth V of Big Data - Validity.

Validity is coming to the fore because of increased consumer and regulatory scrutiny, and it differs from veracity in nuanced but important ways. Veracity was focused on the accuracy and truth of data and never considered the rising tide of data privacy. By this I mean things like whether the source could be trusted, or whether the data was associated with the right IDs or people.

Validity, on the other hand, brings legality to the table. In the context of GDPR, that means data provenance and transparency, the legal basis for processing the data, and whether the data is still within its appropriate retention period.
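To make the distinction concrete, here is a minimal sketch of what a validity check on a single record might look like. It is an illustration only, not how any particular platform implements it: the `CustomerRecord` fields, the `validity_issues` function, and the idea of storing a per-record retention period in days are all assumptions made for this example. The set of lawful bases mirrors the six listed in GDPR Article 6(1).

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional

# The six lawful bases for processing listed in GDPR Article 6(1).
LAWFUL_BASES = {
    "consent",
    "contract",
    "legal_obligation",
    "vital_interests",
    "public_task",
    "legitimate_interests",
}


@dataclass
class CustomerRecord:
    """Hypothetical record shape, assumed for illustration only."""
    source: Optional[str]       # provenance: where the data came from
    legal_basis: Optional[str]  # lawful basis recorded at collection time
    collected_on: date
    retention_days: int         # assumed per-record retention policy


def validity_issues(record: CustomerRecord, today: date) -> List[str]:
    """Return the reasons a record fails the validity check (empty if none)."""
    issues = []
    if not record.source:
        issues.append("missing provenance")
    if record.legal_basis not in LAWFUL_BASES:
        issues.append("no recognised legal basis")
    expires_on = record.collected_on + timedelta(days=record.retention_days)
    if today > expires_on:
        issues.append("retention period expired")
    return issues
```

Note that none of these checks says anything about whether the data is accurate. A record can pass every veracity test and still fail here, which is exactly the gap that validity is meant to cover.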

Volume, Variety, Velocity, Veracity, and Validity

Volume is the V likely to be most challenged in this new, privacy-first paradigm. Data owners are already purging legacy information and consumer data whose validity is coming into question. There are discussions about the potential for a “lean data revolution” in which, instead of focusing on scale, businesses focus on collecting only the data they need and use new regulations to get their houses in order.

In a post-GDPR world, particularly as more countries begin to enact their own data privacy laws to keep pace with personal data creation, we can expect validity to become the number one consideration ahead of all others. After all, what good is a huge, up-to-date, highly accurate customer data set if all it achieves is a court hearing? If you want to learn more about how a CDP can help you meet GDPR compliance, check out our GDPR guide here or head to our GDPR resource hub.
