Should you be buying or building your data pipelines?

With demand for data increasing across the business, data engineers are inundated with requests for new data pipelines. With few cycles to spare, engineers are often forced to decide between implementing third-party solutions and building custom pipelines in-house. This article discusses when it makes sense to buy, and when it makes sense to build.

Access to high-quality data is critical for product, marketing, customer service, and leadership teams across the business.

Not always adept at data acquisition themselves, these stakeholders often rely on data engineering to provide the data they need to make strategic decisions.

As demand for data increases, data engineers need to prioritize requests and invest their cycles wisely. This article will walk through what data engineers should consider when deciding whether to implement a third-party vendor or build a pipeline internally for any given use case.

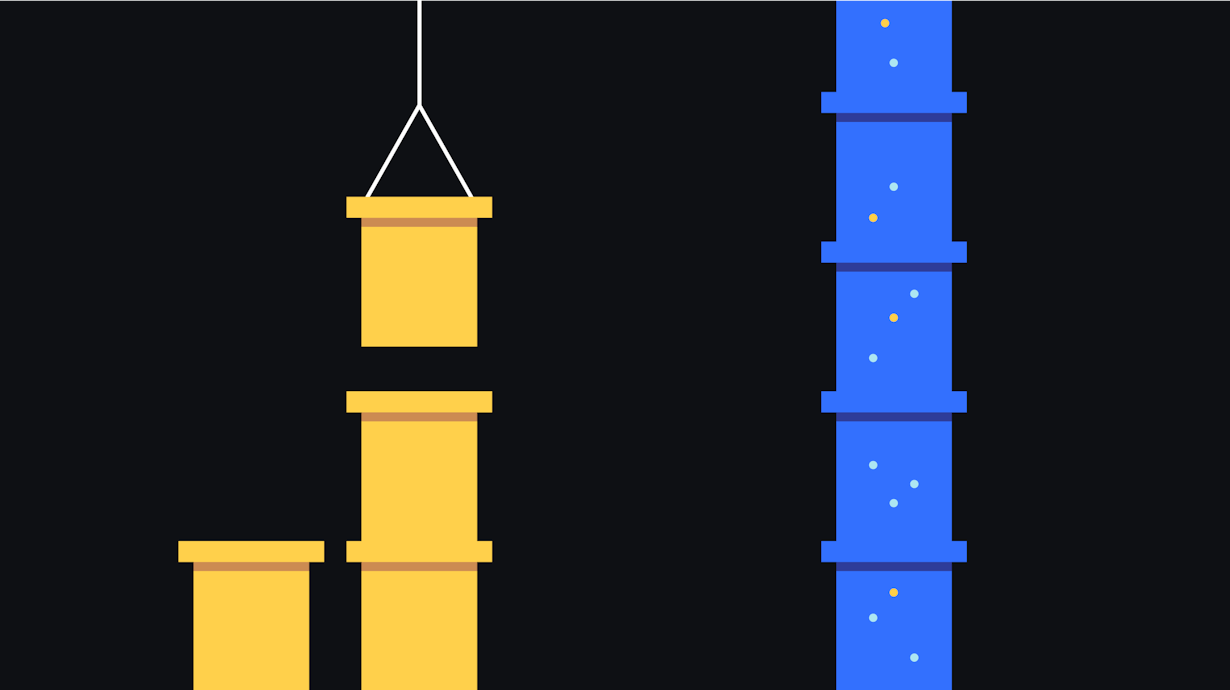

What are data pipelines?

Data pipelines are a series of processes that move and transform data from various sources to destinations where it can be activated for value, or stored for retrieval at a later time. Pipelines can range in complexity based on factors such as how many transformation and validation steps are involved, the cadence at which data is moved, and the number of sources data is being collected from. As more departments across the organization use data to make strategic decisions, having stable, scalable data pipelines in place is critical to business success.
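To make the definition concrete, here is a minimal Python sketch of a pipeline as an extract step, an ordered list of transformation and validation steps, and a load step. It is an illustration, not a production design: it assumes records are plain dicts, and the source (`extract_events`), the steps (`require_user_id`, `normalize`), and the in-memory `warehouse` destination are hypothetical stand-ins for real systems.

```python
from typing import Callable, Iterable, Optional

Record = dict
# A step returns a (possibly modified) record, or None to drop it.
Step = Callable[[Record], Optional[Record]]


def run_pipeline(
    extract: Callable[[], Iterable[Record]],
    steps: list[Step],
    load: Callable[[list[Record]], None],
) -> int:
    """Move records from a source to a destination through ordered steps."""
    loaded: list[Record] = []
    for record in extract():
        current: Optional[Record] = record
        for step in steps:
            current = step(current)
            if current is None:  # a validation step rejected the record
                break
        if current is not None:
            loaded.append(current)
    load(loaded)
    return len(loaded)


# Hypothetical source, steps, and destination, for illustration only.
def extract_events() -> list[Record]:
    return [
        {"user_id": "u1", "event": "Signup", "amount": "0"},
        {"user_id": None, "event": "purchase", "amount": "19.99"},
        {"user_id": "u2", "event": "Purchase", "amount": "42.50"},
    ]


def require_user_id(record: Record) -> Optional[Record]:
    # Validation: drop records that cannot be attributed to a user.
    return record if record.get("user_id") else None


def normalize(record: Record) -> Record:
    # Transformation: consistent event names and numeric amounts.
    return {**record, "event": record["event"].lower(), "amount": float(record["amount"])}


warehouse: list[Record] = []
count = run_pipeline(extract_events, [require_user_id, normalize], warehouse.extend)
```

Adding a source, a step, or a destination changes one argument rather than the whole pipeline, which is one way the complexity factors above (step count, cadence, number of sources) show up in code.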

Data engineers have emerged as the key role that owns the implementation, monitoring, and maintenance of data pipelines. Data engineers work closely with data consumers, such as marketers, product managers, data analysts, and business leaders, to understand each department’s data needs. These needs are often organized in a data plan, which details the size, shape, and purpose of the data desired across the business. With a plan in place, data engineers focus on providing data pipelines that meet teams’ needs at scale, and also on ensuring the validity and timeliness of the data delivered. That process includes testing, alerting, and creating contingency plans for when something goes wrong.
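As a rough illustration of the testing and alerting described above, the sketch below runs two checks on a loaded table: a timeliness check (is the latest load older than an allowed age?) and a crude validity check (did the load contain fewer rows than expected?). The thresholds, the table name, and the `alert` callback, which stands in for whatever paging or chat integration a team actually uses, are all hypothetical.

```python
from datetime import datetime, timedelta, timezone
from typing import Callable, Optional


def run_checks(
    table: str,
    last_loaded_at: datetime,
    row_count: int,
    max_age: timedelta,
    min_rows: int,
    alert: Callable[[str], None],
    now: Optional[datetime] = None,
) -> list[str]:
    """Return the failed checks for one table, calling `alert` for each failure."""
    if now is None:
        now = datetime.now(timezone.utc)
    failures: list[str] = []
    # Timeliness: data older than max_age is treated as stale.
    if now - last_loaded_at > max_age:
        failures.append(f"{table}: stale, last load {now - last_loaded_at} ago")
    # Validity proxy: an unexpectedly small load often signals an upstream break.
    if row_count < min_rows:
        failures.append(f"{table}: only {row_count} rows, expected >= {min_rows}")
    for message in failures:
        alert(message)
    return failures


# Hypothetical example: a table last loaded six hours ago with a small batch.
alerts: list[str] = []
now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
failed = run_checks(
    "events",
    last_loaded_at=datetime(2024, 1, 2, 6, 0, tzinfo=timezone.utc),
    row_count=120,
    max_age=timedelta(hours=2),
    min_rows=1000,
    alert=alerts.append,
    now=now,
)
```

Passing `now` explicitly keeps the check deterministic in tests; in production it defaults to the current UTC time.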

When reliable data pipelines aren’t provided, data consumers are at risk of:

- Acting on stale and/or inaccurate data

- Getting confused between multiple sources of truth

- Spending their time searching for the data they need instead of turning data into business value

Should you be buying or building your data pipelines?

Implementing data pipelines is not a trivial task. Most organizations have dozens, if not hundreds, of data sources feeding their analytics systems. Furthermore, product and marketing teams are beginning to require data to be loaded in real time so that they can power timely use cases such as transactional messaging and personalized advertisements.

Due to the cycles required to build new data pipelines, data engineering teams often consider whether to buy or build the pipeline required for a given use case.

There are several commercial solutions that make common data ingestion, transformation, and loading possible without writing extensive code. Many come with built-in scheduling and job orchestration, two of the more labor-intensive aspects of building data pipelines.

Some of the benefits of implementing a commercial data pipeline are:

- Time-to-value

It’s faster to deploy a commercial solution than it is to build one internally. In situations where data consumers need access to new data quickly, commercial solutions allow you to enable the business without having to dedicate dozens (if not hundreds) of development hours. Additionally, the time you get back can be spent on other projects, such as expansion of critical systems or development of customer-facing features.

- Accessibility for non-technical users

It’s not uncommon for the requirements of a data pipeline to change over time as data consumers demand new data sets to power new use cases. For a data pipeline built in-house, these changes require data engineers to revisit the pipeline and expand or modify it. Commercial pipelines, on the other hand, often offer a web-based interface that allows data consumers to change what data is being collected, how it is being transformed, and where it is being sent without engineering support. This grants business teams more data independence and allows data engineers to refocus their time.

- Packaged integrations

In addition to loading data into a data warehouse and/or data lake, many commercial data pipelines allow you to collect data from, and send data to, common third-party systems such as Salesforce, Google Analytics, Braze, Amplitude, and more. This saves the time otherwise required to build and maintain integrations with these systems. Many commercial pipelines also handle concerns such as job execution timeouts, duplicate data, and source system schema changes. If data consumers need to get data from or to a new third-party system in the future, data can be forwarded by simply connecting a new integration.
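Duplicate handling and schema-change detection are examples of logic a team would otherwise write and maintain itself. The rough sketch below shows simple versions of both; it assumes each record carries a unique `event_id` field and that the destination's known columns are tracked as a set (both hypothetical). It does not describe how any particular vendor implements these features.

```python
from typing import Iterable


def dedupe(records: Iterable[dict], key: str) -> list[dict]:
    """Keep the first record seen for each key value (e.g. an event ID)."""
    seen = set()
    unique = []
    for record in records:
        value = record[key]
        if value in seen:
            continue  # a retried or replayed delivery of the same event
        seen.add(value)
        unique.append(record)
    return unique


def new_columns(records: Iterable[dict], known: set[str]) -> set[str]:
    """Report fields the source started sending that the destination doesn't know about."""
    found: set[str] = set()
    for record in records:
        found |= record.keys() - known
    return found


# Hypothetical batch: one duplicated delivery and one new field from the source.
batch = [
    {"event_id": "e1", "event": "signup"},
    {"event_id": "e1", "event": "signup"},
    {"event_id": "e2", "event": "purchase", "coupon": "SPRING"},
]
unique = dedupe(batch, "event_id")
extra = new_columns(unique, {"event_id", "event"})
```

A detected schema change would then need a policy of its own (add the column, quarantine the records, or alert), which is part of the ongoing maintenance cost of an in-house pipeline.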

On the other hand, there are some trade-offs to consider:

- Pricing scalability

When deciding whether to build or buy, it’s important to consider pricing over time. Commercial data pipelines often price based on volume, though they differ in how they measure volume and how they tier pricing. If deploying a commercial solution, it’s important to partner with a vendor whose pricing will scale with you.

- Customization requires coding

Buying a pipeline off the shelf is just that: it will not be as tailored to your existing architecture as something built internally. Many vendors will allow you to customize your pipelines to a degree, although this will require custom development within an environment that the vendor supports (such as AWS Lambda, Azure Functions, or Google Cloud Functions). If you have a number of custom data sources, such as custom-built REST APIs, it’s worth considering how much work will be required to support these sources.

- Security and data privacy

Although commercial data pipelines often act as data processors (they provide the means of processing data but don’t own the data or define its purpose), it’s important to evaluate the security and data privacy risks of any third-party vendor. This evaluation will be based on your organization’s risk tolerance, regulatory requirements, and potential liability. You may also want to consider the overhead of reviewing and approving a new data processor, which might also introduce international data flows into your current setup.
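Because vendors meter volume and tier prices differently, it can help to model cost against projected growth before committing. The sketch below assumes a hypothetical graduated schedule: the tier boundaries (in millions of events per month) and per-million prices are invented for illustration, so substitute a vendor’s actual price sheet and metering unit.

```python
from typing import Optional

# Hypothetical graduated tiers: (upper bound in millions of events, price per million).
# None marks the final, open-ended tier.
TIERS: list[tuple[Optional[float], float]] = [
    (10, 50.0),    # first 10M events
    (100, 30.0),   # events from 10M up to 100M
    (None, 15.0),  # everything above 100M
]


def monthly_cost(millions: float, tiers: list[tuple[Optional[float], float]] = TIERS) -> float:
    """Graduated pricing: each slice of volume is billed at its own tier's rate."""
    cost = 0.0
    lower = 0.0
    for upper, price in tiers:
        if millions <= lower:
            break  # volume doesn't reach this tier
        top = millions if upper is None else min(millions, upper)
        cost += (top - lower) * price
        if upper is None:
            break
        lower = upper
    return cost


# Project cost and effective price per million at a few hypothetical growth points.
for volume in (5, 50, 200):
    print(volume, monthly_cost(volume), monthly_cost(volume) / volume)
```

Running the same projection against an in-house estimate (infrastructure plus engineering hours) gives a rough break-even point, though the inputs on both sides are estimates.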

Deciding whether to build or buy a data pipeline is a complex decision, unique to each organization and use case. In reality, most data engineering teams will use a mix of in-house built pipelines and third-party vendors throughout their infrastructure. For example, it may be more cost-effective to build a pipeline to handle high-volume ingestion from your company’s iOS app, but to use a third-party vendor to ingest data from your OTT platform.

As James Densmore notes in O’Reilly’s Data Pipelines Pocket Reference, if you do choose a mix of custom and commercial tools, it’s important to keep in mind how you standardize things such as logging, alerting, and dependency management, as a lack of standardization across systems can lead to poor data quality.
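One way to standardize logging across a mix of tools is to route every pipeline run, in-house or vendor-operated, through a shared log schema so that alerting and dashboards query a single record shape. A minimal sketch, assuming a hypothetical vendor webhook payload with `connector`, `state`, and `records_synced` fields; real vendors report run status through their own APIs and payload formats.

```python
import json
import logging
from datetime import datetime, timezone

logger = logging.getLogger("pipelines")


def log_run(pipeline: str, source: str, status: str, rows: int, **extra) -> str:
    """Emit one JSON log line with the same fields regardless of who runs the pipeline."""
    entry = {
        "ts": datetime.now(timezone.utc).isoformat(),
        "pipeline": pipeline,
        "source": source,  # "in-house" or "vendor"
        "status": status,
        "rows": rows,
        **extra,
    }
    line = json.dumps(entry, sort_keys=True)
    # Failures are logged at ERROR so one alert rule can cover every pipeline.
    level = logging.INFO if status == "success" else logging.ERROR
    logger.log(level, line)
    return line


def from_vendor_event(payload: dict) -> str:
    """Map a (hypothetical) vendor webhook payload onto the shared log schema."""
    return log_run(
        pipeline=payload["connector"],
        source="vendor",
        status="success" if payload["state"] == "completed" else "failure",
        rows=payload.get("records_synced", 0),
    )
```

For example, an in-house job could call `log_run("ios_events", "in-house", "success", 1200)` at the end of each run, while a webhook handler calls `from_vendor_event(...)` for the vendor-managed pipeline.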