What the heck is reverse ETL?

Reverse ETL is a process in which data is delivered from a data warehouse to the business applications where non-technical teams can put it to use. By piping data from a data warehouse to downstream business systems, reverse ETL tools fill the gap between data storage and activation.

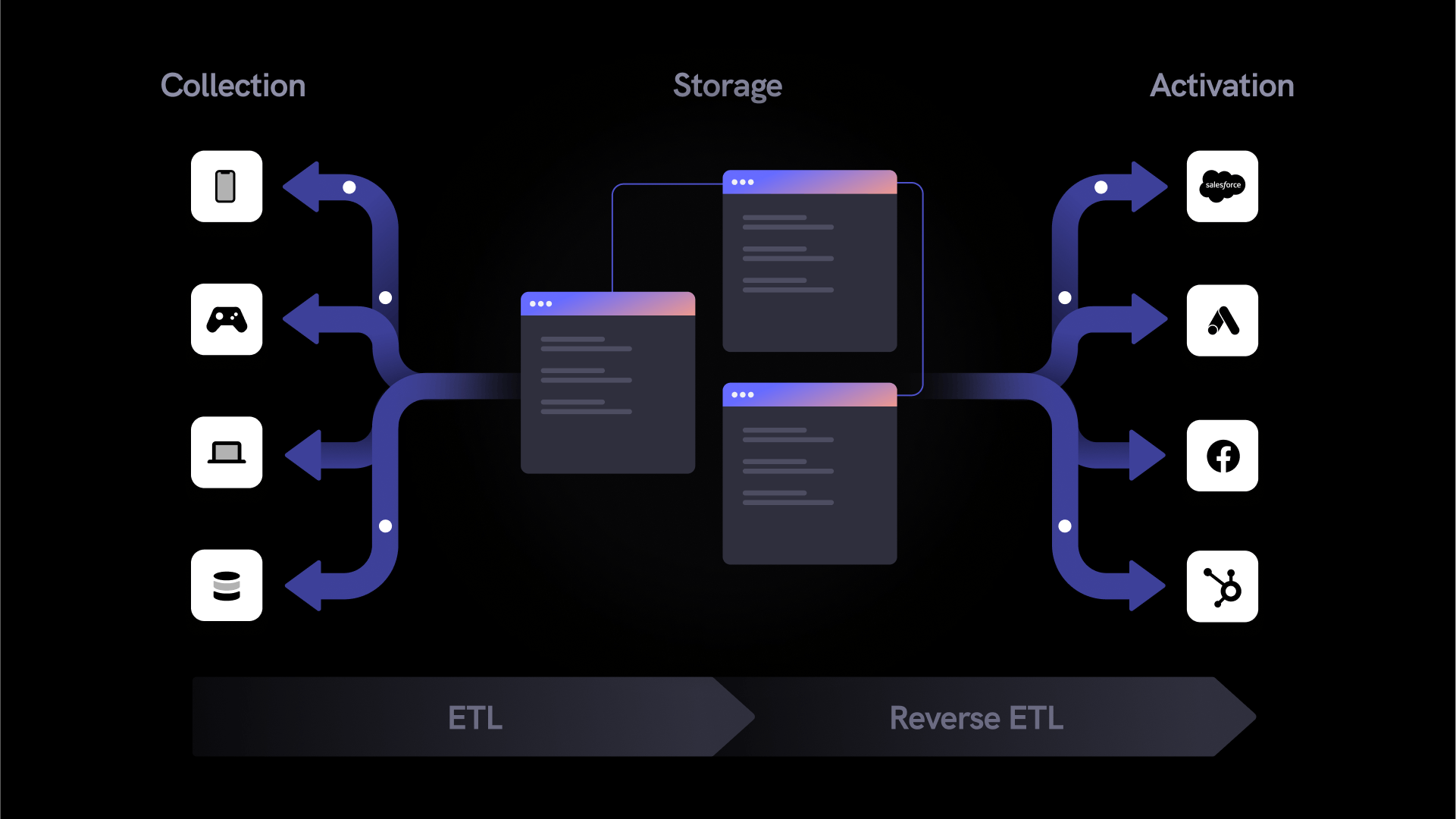

The modern data landscape is densely populated with various tools and systems that collect, store, analyze, monitor, and transfer data. Businesses have a plethora of options for building out their data stack, and several factors––like business goals, internal resources, and existing infrastructure––can influence how data teams choose between these options. But one basic truth from the earliest days of enterprise data still holds: leveraging data in any capacity requires handling separate but interrelated lifecycle phases: collection, transformation, storage, and activation. Or more simply, it requires the “pulling” of data (from collection to storage), and “pushing” of it (from storage activation).

First things first (before the reverse)

With this basic understanding of the data lifecycle in mind, we can begin to break down one of the buzziest concepts in the world of data today: Reverse ETL. For context, let’s begin by breaking down what happens to data at each step in the ETL (or Extract, Transform, Load) process.

Extract

You can’t eat a fish before you catch it. Before you can leverage data in any capacity, you need to collect or “extract” it from places where it is produced or already stored. Most data strategies incorporate data from a wide variety of sources, including but not limited to owned applications and websites, CRM platforms, and legacy systems. Extracting data from these locations is the first step towards conducting business intelligence or analysis, and providing stakeholders like marketing and product teams with the data they rely on.

Transform

When data is extracted from a source, it is typically in a raw state and not yet suitable for analysis or activation. At the collection phase, data may include duplicate, inaccurate, or outdated records, and it won’t conform to a centralized data model that matches incoming data from other locations. This is where data transformation comes in. At this stage, data is cleansed, deduplicated, verified, and organized so that it adheres to a consistent structure and meets standards of data integrity. This step aims to ensure that when the data is loaded into a central repository and used for analysis, it will produce accurate and reliable results.

Load

Once data has been collected and transformed, it’s time to deliver it into a system of record where it can be combined with other data, analyzed, and delivered to downstream tools (we’ll get to that part later). This is the purpose of the “load” step. Often, the system into which the cleaned and organized data is loaded is a data warehouse, like BigQuery, Amazon Redshift, or Snowflake.

The three processes that comprise ETL happen in the “pulling” half of the data lifecycle. ETL accomplishes the task of gathering data from disparate sources, organizing it into a cohesive structure, and delivering it into a central repository for storage and analysis. Once the ETL process is complete and data is housed in the data warehouse, the data is useful in a number of ways. For example, data scientists and analysts can analyze historical data to predict future trends, or produce dashboards for internal teams using BI tools like Looker and Tableau.

Data stored in a data warehouse has limited utility to semi- and non-technical stakeholders like marketing and product teams, however. For these teams to take action, data must be pushed to the “the last mile” of the data stack––that is, delivered to systems like engagement platforms and analytics tools where business stakeholders can put it to use. This is the step in the data lifecycle that Reverse ETL tools are built to fill.

What is Reverse ETL?

Reverse ETL describes a data processing workflow in which data is extracted from a centralized repository, transformed into a structure that meets the ingestion requirements of specific systems, then loaded into these systems in order to be activated or analyzed. This should sound familiar, since these are the same steps touched on above. The only difference in Reverse ETL is that these processes occur in the “pushing” rather than the “pulling” half of the data lifecycle.

In Reverse ETL, the extraction process entails querying and copying desired data from the data warehouse. Once copied, this data is transformed into a state that is compatible with the API specifications of a target downstream system. Finally, it is loaded into these tools, where end users can activate it. By handling these processes, Reverse ETL tools provide companies with a way to sync data from their data warehouse to downstream systems, and provide marketing, product, sales, and other business teams with a way to utilize data from the data warehouse.

What are the benefits of Reverse ETL?

By acting as a bridge that takes data from the data warehouse to the tools where business teams can put that data to use, reverse-ETL solutions can unlock a variety of use cases and create efficiencies for both business and data teams.

Activation of data in the data warehouse

A data warehouse is a great tool for storing vast quantities of data over a long period of time and enabling data engineers and data scientists to query and analyze this information. But without a way to pipe this data to the tools that matter in day-to-day customer engagement, the data warehouse is of little value to non-technical internal teams like marketing, sales, and customer support. Reverse ETL tools have the ability to make copies of the data in the data warehouse, transform it to meet the specifications of external APIs, and deliver it into tools like Salesforce, Hubspot, and Google Ads. Bridging this gap helps make data in the data warehouse accessible to non-technical teams, thereby increasing its value.

Increased data engineering efficiency

Even without a Reverse ETL tool, data in the data warehouse can be made available to non-technical teams. However, in the absence of a pipe that connects the data warehouse to downstream systems, data engineers would have to take on a lot of manual work to enable marketing teams to leverage the data they need in the form of building and maintaining each of these API connections individually. This could quickly amount to an unsustainable amount of engineering overhead, and significantly add to the time it takes for business teams to access the data they need when they need it.

Data access for non-technical teams

Reverse ETL tools increase the speed at which marketing, sales, and other business teams can access and operationalize the data living in the data warehouse. Without the pre-built API connections and downstream tools, data engineers would have to manually query, cleanse, and transform datasets for end users whenever new use cases arise. Reverse ETL solutions enable business teams to forward pre-build data models to downstream tools without engineering support.

Caveat emptor: Important considerations regarding Reverse ETL

For many organizations, the data warehouse serves as a great system of record. Making the rich insights in the data warehouse available to business teams enables marketing, product, and growth stakeholders to gain a more complete understanding of the customer journey and deliver more effective personalization.

Reverse ETL solutions provide significant value by relieving data teams from much of the work required to make the warehouse data accessible to business teams. It must be noted, however, that most Reverse ETL solutions are not built to support the complex data requirements of modern marketing organizations.

- Reverse ETL solutions currently aren't build to stream data to destinations in real time, prohibiting business teams from deliver the real-time personalization that so many consumers now expect.

- Data connections are configured on a one-to-one basis, required organizations to perform repetitive manual work to map data connections across their tech stack.

- Audience building requires SQL, prohibiting non-technical stakeholders from creating segments without support from the data team

- Automations related to identity resolution, data quality validation, and data governance, critical to empowering business teams to activate data at speed and scale, are not supported by Reverse ETL solutions and therefore must be executed in the data warehouse, increasing total cost of ownership.

Teams considering unlocking the data in their data warehouse with Reverse ETL may consider a real-time Customer Data Platform with Reverse ETL capabilities. By combining the simplicity of Reverse ETL with the power of a real-time CDP, organizations can relieve data teams from ad hoc data wrangling while also empowering business teams to enhance their real-time personalization programs with insights from the data warehouse.

To learn more, you can check out mParticle Warehouse Sync here.