How Reverb optimized their data workflows at scale and gave users the rockstar treatment

With mParticle at the heart of their data stack, the world’s largest online music marketplace said goodbye to burdensome ETL pipelines, slashed their data maintenance workload, and unlocked new opportunities to build data-driven features into their product.

In the early 2010s, there was a void in the music eCommerce landscape bigger than the dark side of the moon, a chasm wider than Neil Peart’s drum set. Music retail websites of the time were fragmented and cumbersome, and they failed to meet the needs of an ever-evolving music community. What the space truly lacked was an online platform built for musicians by musicians––a place where anyone in the world could easily and affordably buy and sell their gear.

In 2013, Reverb set out to change this. In the eight years since its launch, Reverb has become the largest online marketplace dedicated to buying and selling musical instruments. This full-fledged digital community enables brick-and-mortar retailers, individual hobbyists, and rock stars alike to buy, sell, and bond over gear. The site’s listings range from electric, acoustic, and bass guitars to accessories, pro audio gear, synthesizers, drums, orchestra instruments and much more. In addition to its eCommerce functionality, Reverb’s industry news, product reviews, gear demos, and artist interviews are among the best and most insightful music content online. In 2019, Reverb was acquired by Etsy, the global marketplace for unique and creative goods, providing the music retailer even more resources with which to grow and improve their community.

In this story, we’ll see how Reverb’s engineering team addressed the challenges of collecting, unifying, and activating customer data as the company grew, and explore how the company’s developers use mParticle to ease the burden of data management and enable new use cases for their data.

Mo’ users mo’ data problems

As Reverb began serving more users across web and mobile touchpoints, the company soon faced the challenge of meeting a diverse set of customer needs. Every user’s on-platform experience needed to speak to what brought them to Reverb in the first place, whether that customer was a studio engineer purchasing industry-standard recording equipment or a hobbyist seller looking to make $100 off a dusty guitar in their closet. For a peer-to-peer marketplace like Reverb, growth comes not only in the form of users but also as inventory that continuously expands in volume and variety, which makes delivering personalization even more complex.

Reverb’s customer touchpoints include its desktop and mobile web experiences, as well as iOS and Android apps that deliver more granular control over various buying and selling features to power users. Between purchasers, sellers, and casual browsers, Reverb has ample opportunity to leverage data on how users engage with their site and apps in order to improve the shopping experience. Making sense of this cross-channel information and transforming it into formats and structures where it can be used within various engagement tools, however, is easier said than done.

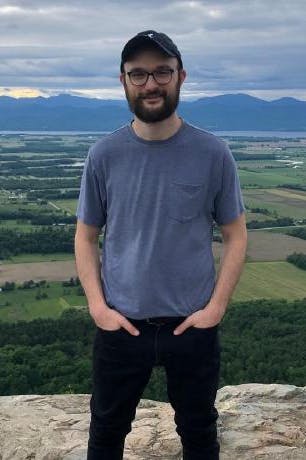

Reverb’s Director of Engineering Geoff Massanek characterizes this goal as delivering “multiple products within the same product”––that is, using customer data to give each user a unique set of interactions with the brand, from web and mobile browsing to off-site messaging. Accomplishing this goal presents a slew of complex engineering challenges, however, and this is ultimately what led Reverb to place mParticle at the heart of their data stack.

A five-year veteran of Reverb’s Engineering team, Massanek is the driving force behind the company’s data strategy. He presides over the data ecosystem that allows this massive music marketplace to thrive, overseeing the MarTech, Data Engineering, and On-site Advertising teams. MarTech handles SEO, external digital advertising, and customer-facing communications like email and push, among other things. Data Engineering is responsible for functions including data warehousing, building internal data pipelines, running database transformations and aggregations, and training and maintaining machine learning models. Finally, the On-site Advertising team builds Reverb’s advertising service, which allows users and external retailers to reach the platform’s visitors with targeted display ads.

[Engineering] time keeps on slippin’… slippin’… slippin’

When it comes to their data tooling, the Engineering team at Reverb likes to strike the right balance between building bespoke solutions and buying the right vendor systems. To lay the foundation required to deliver personalized experiences, Reverb’s engineers (especially the developers on their data team) found themselves spending quite a bit of time building and maintaining heavy ETL (Extract, Transform, and Load) pipelines to ingest customer data and federate it out to internal and external destinations.

Any engineer who has written an ETL pipeline to deliver real-time behavioral data to downstream tools, especially to power a tailored in-session experience, knows this is a major challenge. From identifying users, to loading their data profiles, to serving meaningful data to an end client system, a real-time pipeline can require an intricate arrangement of aggregations, caches, APIs, and more.

Before adopting mParticle, the pipeline that transported Reverb’s user event data to their internal warehouse also entailed leveraging a complex chain of systems. “We had an API that would receive internal events, then to get them from our backend into a warehouse we ended up putting the events through a complicated pipeline,” Massanek said.

While these self-built data pipelines did enable a better, more tailored, user experience, Reverb’s Product and Marketing teams soon found themselves requiring more flexibility. As their strategy evolved, the growth teams increasingly looked to custom audiences as a way to tailor an individual’s onsite experience to their intentions and preferences at any given moment.

With the proverbial "build vs. buy" seesaw beginning to favor homespun solutions to a slightly impractical extent, Reverb began the search for the right data partner. When Massanek and his colleagues encountered mParticle, and in particular the Audiences solution, they immediately saw value in what dynamic audience building could deliver. Letting mParticle automatically update pre-defined audience segments was a massive relief for Reverb’s engineers, as it eliminated the need to build pipelines for manual querying and uploading. Audiences was one of the earliest mParticle features that Reverb adopted, and it played a key role in their decision to place mParticle at the heart of their data infrastructure.
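To illustrate the idea of audience segments that update automatically, here is a minimal sketch in Python. It is not mParticle's API or Reverb's code: the `UserProfile` class, the audience names, and the rules are all hypothetical, chosen only to show how membership can be re-evaluated each time an event arrives instead of being recomputed by manual queries and uploads.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class UserProfile:
    """Hypothetical profile: a user ID plus a running count of each event type."""
    user_id: str
    event_counts: dict[str, int] = field(default_factory=dict)


# An audience is a name mapped to a rule that inspects a profile.
# These names and rules are illustrative assumptions only.
AUDIENCES: dict[str, Callable[[UserProfile], bool]] = {
    "cart_abandoners": lambda p: (
        p.event_counts.get("add_to_cart", 0) > 0
        and p.event_counts.get("purchase", 0) == 0
    ),
    "repeat_buyers": lambda p: p.event_counts.get("purchase", 0) >= 2,
}


def record_event(profile: UserProfile, event_name: str) -> set[str]:
    """Record one event, then return the audiences the user now belongs to."""
    profile.event_counts[event_name] = profile.event_counts.get(event_name, 0) + 1
    return {name for name, rule in AUDIENCES.items() if rule(profile)}
```

In this sketch a user who adds an item to their cart lands in `cart_abandoners`, and leaves that audience as soon as a purchase is recorded, without anyone running a query by hand.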

“Having a ‘way to do things’ ends up really saving time in terms of discovery, learning, ramping up and patterns,” Massanek says. Not having to build and maintain “big, heavy ETL pipelines” to move data across systems and create audiences “freed up our analytics team to do more forward-thinking analysis rather than just rote querying of systems,” he added.

I can see clearly now

By integrating mParticle’s SDKs and APIs across their customer touchpoints, Reverb also realized a major benefit in terms of data quality and consistency. “Before mParticle, we had a lot of code in our backend written to federate out data coming in from various sources,” Massanek said. “If someone purchased something, for example, this code would send that federated data to various sources, each of which required a slightly different shape.”

After adopting mParticle, Reverb was able to eliminate all of that data federation code, along with the maintenance work that goes along with it. This provided the team with a clearer and more comprehensive picture of customer behavior.
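To make that maintenance burden concrete, here is a minimal sketch of what hand-written federation code of this kind might look like. The destination names and payload shapes are hypothetical and do not reflect Reverb's actual backend; the point is only that every destination needs its own formatter for the same purchase event.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PurchaseEvent:
    user_id: str
    sku: str
    price_cents: int
    currency: str


# Hypothetical destinations: each expects the same purchase in a different shape.
def to_email_tool(event: PurchaseEvent) -> dict:
    return {"customer": event.user_id, "action": "purchase", "item": event.sku}


def to_analytics_tool(event: PurchaseEvent) -> dict:
    return {
        "uid": event.user_id,
        "event_type": "Purchase",
        "properties": {"sku": event.sku, "revenue": event.price_cents / 100},
    }


def to_warehouse_row(event: PurchaseEvent) -> dict:
    return {
        "user_id": event.user_id,
        "sku": event.sku,
        "price_cents": event.price_cents,
        "currency": event.currency,
    }


FORMATTERS = {
    "email_tool": to_email_tool,
    "analytics_tool": to_analytics_tool,
    "warehouse": to_warehouse_row,
}


def federate(event: PurchaseEvent) -> dict[str, dict]:
    """Build one payload per destination; each new tool means another formatter."""
    return {name: formatter(event) for name, formatter in FORMATTERS.items()}
```

Each new tool adds another formatter to write, test, and keep in sync, which is the kind of work a central data layer is meant to absorb.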

“We’re now recording every page view and honing in on landings,” Massanek says. “This lets us really understand where our traffic is coming from, where sessions are beginning, and how those add up to a purchase. We used to use third-party reporting that would report on last-touch attributions, and with our mParticle event data we ended up making a backward-looking model that really helped us understand the customer journey.”

Enabling new tools and use cases

With mParticle acting as a central hub in their data infrastructure, Massanek and his team decided to capitalize on their newfound data capabilities by adopting additional tools in their stack. One of these partners was Amplitude, which has since become Reverb’s go-to platform for understanding how their product features impact customer journeys. When Reverb's growth teams previously relied on internally built ETL pipelines to understand their users and audiences, the insights they derived were limited by the fragmented nature of this information. With their event data flowing into mParticle, it was easy to forward granular, canonical, and organized first-party data to Amplitude, where it could then be used to produce richer and more actionable product insights.

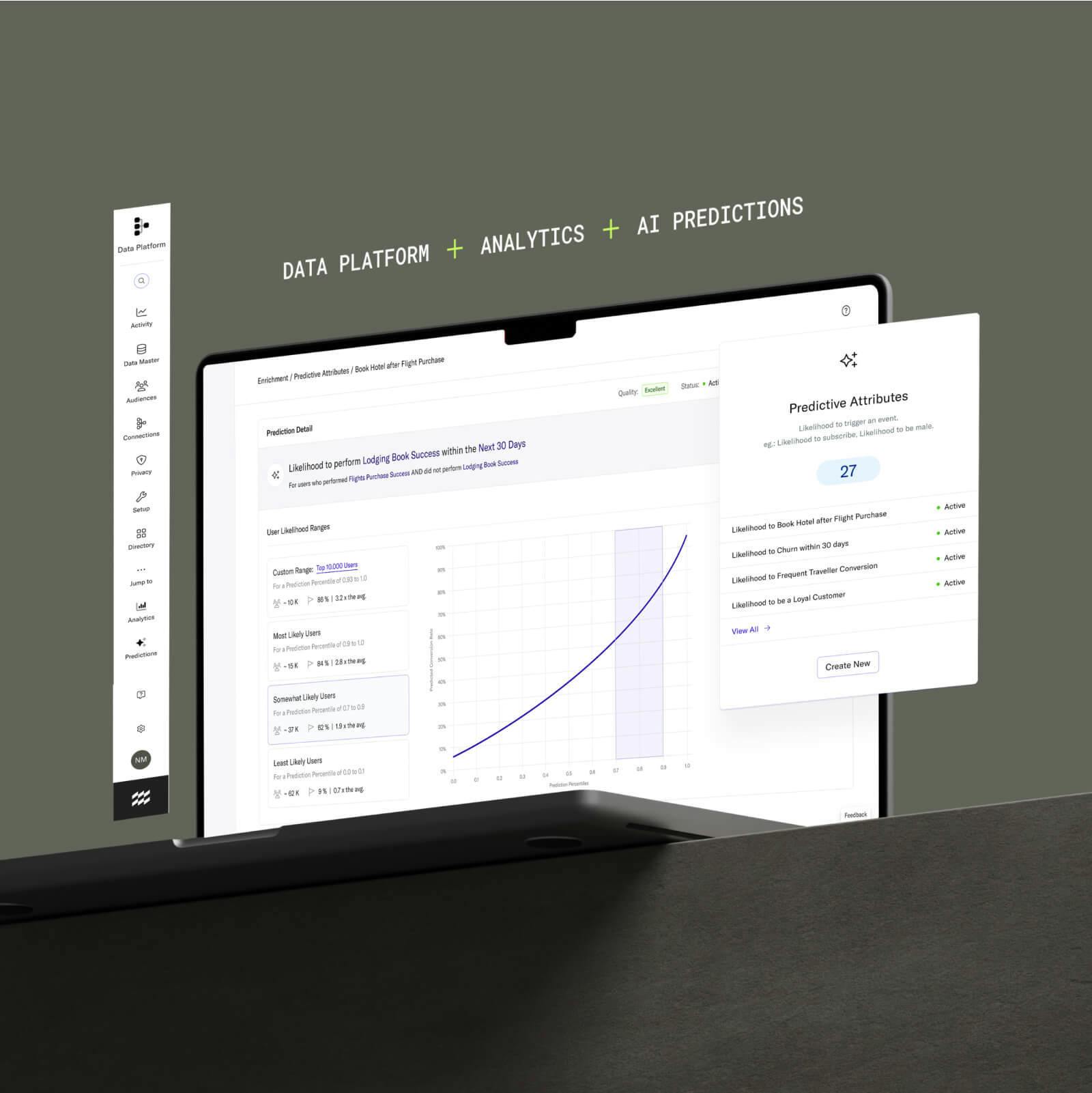

Lately, Reverb’s engineers have also been leveraging the mParticle Profile API in conjunction with internal predictive code on their backend as a way to drive both in-app and offline personalization. Developers at Reverb have built scoring algorithms they refer to as “affinities”––jobs that ingest a user’s onsite behavioral data and generate a list of additional items in which that user would likely be interested. These “affinities” are then tied to that individual’s user profile in mParticle, at which point the Profile API can surface them in the form of recommendations the next time that user visits the site.
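As a rough illustration of what an affinity job could look like, the sketch below scores product categories from a user's behavioral events and returns the top few. The event weights, category names, and scoring rule are assumptions made for this example; Reverb's actual algorithms are not described in this story, and the step of attaching the result to an mParticle profile is deliberately left out.

```python
from collections import Counter

# Hypothetical weights: stronger signals of intent count for more.
EVENT_WEIGHTS = {"view": 1.0, "add_to_cart": 3.0, "purchase": 5.0}


def score_affinities(events: list[tuple[str, str]], top_n: int = 3) -> list[str]:
    """Rank categories by weighted interaction count.

    `events` is a list of (event_type, category) pairs. Unknown event
    types contribute nothing, and ties are broken alphabetically.
    """
    scores: Counter = Counter()
    for event_type, category in events:
        scores[category] += EVENT_WEIGHTS.get(event_type, 0.0)

    ranked = sorted(
        ((category, score) for category, score in scores.items() if score > 0),
        key=lambda pair: (-pair[1], pair[0]),
    )
    return [category for category, _ in ranked[:top_n]]
```

A job like this could run on a schedule, with the resulting list stored as an attribute on the user's profile so it is available the next time they visit.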

“We’ve been using these affinities as a way to personalize and segment customer emails for a while,” Massanek says. “I’ve been pushing this vision that our email and offsite marketing should be reflected on our site, and with its data federation abilities combined with the Profile API, mParticle is a really nice way to connect those dots and make those experiences more seamless.”

Looking to the future

One frontier that the Engineering team is particularly excited about is machine learning-powered recommendation. Massanek and his colleagues see mParticle tools like the Profile API as a path to bringing this level of one-to-one personalization into their products.

“If we can tell that you’re on the fence about what to buy, maybe we can give you the info you need to decide, or even give you an incentive, we’d like to,” Massanek says. “Through mParticle and some of the other partners we have plugged in there, I think we’re on the cusp of really being able to bring those experiences to fruition.” He adds, “We’re just starting in that direction, but I think that will be a really big next chapter for us.”

Massanek and his team are also looking forward to getting even more value out of audience building, and they are continuing to experiment with different segmentation strategies. “I think figuring out what level of granularity, how specific or generic the audiences should be, and the best way to slice and dice them to get fresh messaging to the right groups of people is still an area we are exploring,” Massanek says.

Conclusion

In total, Reverb’s engineers have saved countless developer hours by no longer having to build and maintain internal data pipelines. Without needing to enlist technical support to onboard new systems, the company’s growth teams have been able to adopt five new key tools into their stack, none of which developers will have to maintain.

Interested in learning more about mParticle and how we can save your Engineering team from integration maintenance and unlock new and powerful use cases for your customer data? Try out our platform demo here.

Learn more about mParticle’s SDKs, APIs and features like the Platform API in our documentation.