Beyond the Hype: Getting started on your Artificial Intelligence (AI) for marketing journey webinar recording

Hear Adam Biehler, VP of BD & Partnerships from mParticle, and Bilal Mahmood, CEO of ClearBrain, as they discuss how to get your data ready for predictive personalization.

Alex Maguire: Hello everyone, and welcome to our webinar about artificial intelligence for a better marketing journey. Thank you so much for joining. With me are Bilal Mahmood, the CEO of ClearBrain, and Adam Biehler, mParticle's VP of BD & Partnerships. Just a quick housekeeping item: if you have any issues with the audio or visuals, please feel free to message us in the chat at any point. If you have any questions, please enter those as well. We will have Q&A at the end and will answer as many as we have time for. And lastly, we will follow up with the recording in case there's anything you missed and want to revisit. With that, I will pass it off to you, Adam!

Adam Biehler: Thank you very much, Alex. Bilal, would you like to just do a quick, brief introduction of yourself and then we can get started here?

Artificial Intelligence (AI) for a better marketing journey.

Bilal Mahmood: Absolutely! Hi everyone. I'm Bilal, cofounder and CEO of ClearBrain. I'm really excited to be here. Briefly about ClearBrain: our mission is very much in line with the topic of this webinar, enabling every marketer to leverage AI to make better decisions. That desire stems from my background. Previously, I was head of data science at Optimizely, where we deployed teams of engineers and data scientists alongside marketing teams to predict things like conversion and churn. I then connected with my cofounder, Eric [Pollmann], who was the first SRE on Google's ad team and helped scale its predictive recommendations tenfold, to millions of dollars a day. We put our heads together, along with other team members from Uber and DraftKings, and saw the opportunity to democratize what we'd built internally at Google and Optimizely, so that any company could leverage it out of the box and predictively target users with personalized experiences using artificial intelligence. So we're excited today to chat with Adam and mParticle about a joint solution through which any company can leverage these things together, out of the box.

Adam: Awesome. Thank you very much. As Alex mentioned, I lead partnerships and business development here at mParticle. I'm really excited to have the ClearBrain team and Bilal on the call. AI is a very intriguing topic in the market right now. It's one of the buzzwords we hear a lot, and we want to make sure this presentation gets a little more granular. But before we get into the how of AI, we want to talk a little more about the why. And we're not talking about Skynet here... We're talking about why it's necessary for marketers to pay attention to this now, and how it helps. It's actually relevant in your everyday life, today.

The digital experience has changed

So with that, we're going to jump in. Everybody on this call is well aware that the digital experience has changed. But as consumers have moved from buying in store, to buying on the web, to buying from or engaging with brands across multiple devices, that shift has created a lot of variability, with implications for how we think about what we're doing as businesses and how we're serving those customers.

The job of marketers has evolved

And with that, the job of the marketer has evolved. When the web era was born and companies were just starting to put eCommerce online, a lot of the marketing focus was really just on acquiring new users and driving that initial purchase. For marketers, the scope is now the entire customer journey: it's about acquiring and keeping customers throughout the entire customer lifecycle. The definition of what a marketer is today, and the roles within the marketing organization, have evolved along with that.

Conversion throughout the customer journey

So it really is about delivering a great experience across the customer journey, not just focusing in a silo on how to get more customers on board. But the challenges really stem from this notion of customer experience, and what a great experience actually means. So I want to dive into that for a second.

What great CX means

When we talk about what great CX means, it's really built on the concept of consumer expectations and how those overlap with the real experience the customer actually has. Consumer expectations are really hard to define, right? Because every individual has a different set of expectations. But for a second, I want to take you through an exercise: put yourself in the shoes of a buyer, as a consumer.

"Perception is reality" for the average customer

Everybody's heard the phrase "perception is reality." Those of us on this call tend to live in the tech industry and understand what's going on behind the scenes, but for the average customer that phrase is even more relevant. Their perception of what a digital experience should be is set by the brands they engage with on a daily basis: the Netflixes of the world, the Airbnbs, the Amazons, the Spotifys, the Ubers. These companies are essentially setting the bar for what all of our customers expect in how they engage with a brand.

Trained to expect "the BMW experience"

And the experiences they deliver are what I like to call the BMW experience. They are highly personal, and very predictive of what a customer wants to be doing. They're real-time, so they're immediate. They're seamless, independent of where you're engaging with those brands. And they're highly automated, driven by machines, so the number of employees these companies need to serve those experiences is far less than what you would find in a traditional organization delivering that level of customer satisfaction and customer experience.

Most brands aren't equipped to keep up

So you end up in a world where our customers expect this BMW experience because they engage with these brands daily. And the traditional enterprise that is competing with these brands has a number of headwinds that make it very, very difficult to actually deliver on what that experience needs to be.

Some of those headwinds, just to call them out: first, the explosion of devices across which people can engage with a brand. Look at voice, with some of the new innovations there: that's a brand new channel with new platforms and new data that can be collected.

Regulatory change is a massive, massive market mover. GDPR, which I'm sure we're all aware of, has recently come into effect, and it has created a significant shake-up in how brands are able to think about reaching their customers and using their data.

The proliferation of cloud technology is making it relatively easy for companies to build new solutions that solve a very specific problem in the marketing journey; the barriers are smaller. And as marketers, we all want to use those tools to help drive growth, and we don't actually have to go through IT anymore. We can swipe a credit card and be up and running with a lot of tools in 10 minutes. That's great for immediate-term outcomes, but in terms of understanding what your customers are doing, you're just creating another silo. So cloud proliferation is making this challenge even more prevalent.

Then the scarcity of developers is really becoming a challenge for these traditional enterprises, because a lot of the data and assets they want to use live in traditional systems that really weren't built for today's web generation. So you need to find the key skill sets and talent to actually unlock what that data opens up for you.

And then there's the massive scale of data. These businesses may have their own data centers and may not have adopted cloud yet. They should be moving that way, and I expect they are, but they may not be able to support the scale of data and take advantage of what it potentially offers.

A gap forms

So those headwinds create a gap between customers' expectations and the actual experience that a brand coming from that legacy world is able to deliver. The brand experience a customer has can be difficult, depending on the channel.

It may not be built for a mobile app, so the resolution looks funky, or you may not even see the add-to-cart button. It's time-consuming: you have to log in on multiple platforms because the brand doesn't necessarily know who the user is across them. In a lot of cases it's insecure; if it isn't built for a native platform, it may be a bit of a hacky workaround.

And sometimes it's late, right? If I'm getting a discount offer for something a week later because it wasn't served up in real time, what's the point? And I've seen scenarios where it's just dead wrong: you're assuming I'm a person I'm not, based on data that came from who knows where.

So businesses that were set up in the digital era, like the Netflixes of the world, continue to innovate, and customers' expectations keep getting higher and higher. But the experience these traditional businesses can deliver is weighed down by these headwinds, and the gap continues to grow.

So how do you close that gap?

So how do you close the gap? That's really why we think this is an important topic. One of the key things we are seeing within those companies is that AI, letting machines help manage what these forces are causing, can be a key influencer in closing that gap and maximizing the overlap between your customers' expectations and their experience.

Make those forces a "growth tailwind"

Ultimately, it's about making sure the actual user experience is on par with what those newer businesses are delivering, so your business can match that BMW experience. And by unlocking AI, along with the machines, the data, and those forces, instead of being a headwind, they become a growth tailwind for you.

That's really why this topic is so important. We're not saying you have to re-architect your business. You could do a lot of what we are talking about today immediately, and that's really what we want you to walk away with. So please ask questions about it.

Once you've done this, you really can let your current customers inform how and where to find your next customer. So instead of having to hire 10 new people (you should be hiring, don't get me wrong), you can use automation to determine where and when to find new customers. You can let your customers engage on all of these screens and be sure the experience is right and seamless.

And this is done at scale, because machines are doing the work and it's entirely automated. Your business can be agile and iterative: as new changes come downstream, you can still use your data and still match whatever those new expectations are, and your engineers can focus on the core problems your business has.

Leverage AI to maximize the overlap

Doing that with mParticle and ClearBrain is rooted in what we think are four basic stages to get going with AI right now. We're going to dig into those topics in a bit, but that's the 'why' of why we think this is really important to pay attention to. In today's market and digital ecosystem, it's not a future state; it's a now state. Your customers' expectations are at that level right now, and every day you're not trying to catch up, you're probably falling further behind.

Stage 1: Implementation, the data foundation

So we really think the core of this (and this is from an mParticle lens, but I think anybody who has played in the data space understands it) is that implementation is the foundational layer that sets the groundwork for how successful you are with anything downstream. That may be a personalization initiative, or it may be something as simple as sending an email.

From our lens, implementation really starts with understanding the different components of your business and the business KPIs you want to optimize around. We tend to group everything into five key buckets from a data standpoint. The first is eCommerce data; we have a number of customers in the eCommerce space, so we tend to treat eCommerce as a tier-one category. Digging in there: what are the attributes associated with a product?

So you have to collect all the relevant data you want to optimize around from an eCommerce standpoint. Then there are lifecycle events: opening an app, closing an app, or the app going into the background. And then, what is the user doing? What are their behaviors? What are the IDs associated with that user, and the attributes that are firm data points about that user?

Every business is different, right? So you need the flexibility to extend a data model to collect all the custom events that drive conversion in your business but may not apply to other businesses. Sometimes those are the competitive differentiation points that let you optimize where you're driving growth. And then there's the standard screen view information.

Collecting and unifying the right data

So for us it's really about making sure you've thought through all of these points, understanding how they should come together from all the different places a customer interacts with you, and collecting and unifying that data in a standard way, independent of where those touchpoints are generating data from your customer:

- A native app on iOS;

- Or a cloud service your company uses to make sure you're responding very rapidly to support cases that have been filed;

- Or a back-end data warehouse where the data sits and you want to load it up through a server-side API;

- Or maybe it's just in a spreadsheet.

No matter where it is, you need to be able to map all of that data to a standard data model and create a canonical format for who your customer is and what they are doing. So: who is your customer (identifying them), and what behaviors are they taking across the different places they engage with your brand?
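To make the idea of a canonical format concrete, here is a minimal Python sketch that maps three differently shaped inputs (an iOS app payload, a warehouse row loaded server-side, and a spreadsheet row) onto one event record. The `CanonicalEvent` fields, the `event_type` buckets, and every source field name are hypothetical illustrations, not mParticle's actual data model or SDK format.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, List

# Hypothetical canonical event; field names are illustrative only.
@dataclass
class CanonicalEvent:
    user_id: str
    event_type: str   # e.g. "commerce", "lifecycle", "custom", "screen_view"
    event_name: str
    source: str
    timestamp: datetime
    attributes: Dict[str, Any] = field(default_factory=dict)


def from_ios_sdk(payload: dict) -> CanonicalEvent:
    """Map a hypothetical iOS payload (timestamp in epoch milliseconds)."""
    return CanonicalEvent(
        user_id=payload["customerId"],
        event_type=payload["type"],
        event_name=payload["name"],
        source="ios",
        timestamp=datetime.fromtimestamp(payload["ts_ms"] / 1000, tz=timezone.utc),
        attributes=dict(payload.get("attrs", {})),
    )


def from_server_row(row: dict) -> CanonicalEvent:
    """Map a hypothetical warehouse row (ISO-8601 timestamp with offset)."""
    reserved = {"user_id", "event_type", "event", "occurred_at"}
    return CanonicalEvent(
        user_id=row["user_id"],
        event_type=row["event_type"],
        event_name=row["event"],
        source="server",
        timestamp=datetime.fromisoformat(row["occurred_at"]),
        # Every non-reserved column becomes an event attribute.
        attributes={k: v for k, v in row.items() if k not in reserved},
    )


def from_spreadsheet(cells: List[str]) -> CanonicalEvent:
    """Map a hypothetical spreadsheet row: user, event name, ISO timestamp, SKU."""
    user, name, when, sku = cells
    return CanonicalEvent(
        user_id=user,
        event_type="commerce",
        event_name=name,
        source="spreadsheet",
        timestamp=datetime.fromisoformat(when),
        attributes={"sku": sku},
    )


events = [
    from_ios_sdk({"customerId": "u1", "type": "commerce", "name": "add_to_cart",
                  "ts_ms": 1_530_000_000_000, "attrs": {"sku": "apple"}}),
    from_server_row({"user_id": "u1", "event_type": "commerce", "event": "purchase",
                     "occurred_at": "2018-06-27T08:00:00+00:00", "sku": "apple"}),
    from_spreadsheet(["u2", "add_to_cart", "2018-06-26T12:00:00+00:00", "banana"]),
]
# Once unified, events from every touchpoint can be ordered on one timeline.
events.sort(key=lambda e: e.timestamp)
```

The design choice here is that each source gets its own small adapter, while everything downstream only ever sees `CanonicalEvent`.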

Know where the data needs to go

And so once you've done that, you have this foundation. But as you're building it, you need to be thinking: where am I going to want to send that data today, and in what format? And where am I going to want to send it tomorrow? You just have to understand this conceptually. Maybe you're in the early stages of a business and you're just trying to gain adoption, so you only want to send that data to an advertising platform. That's fine, but you're eventually going to want to run email marketing, and you're eventually going to want a case management system.

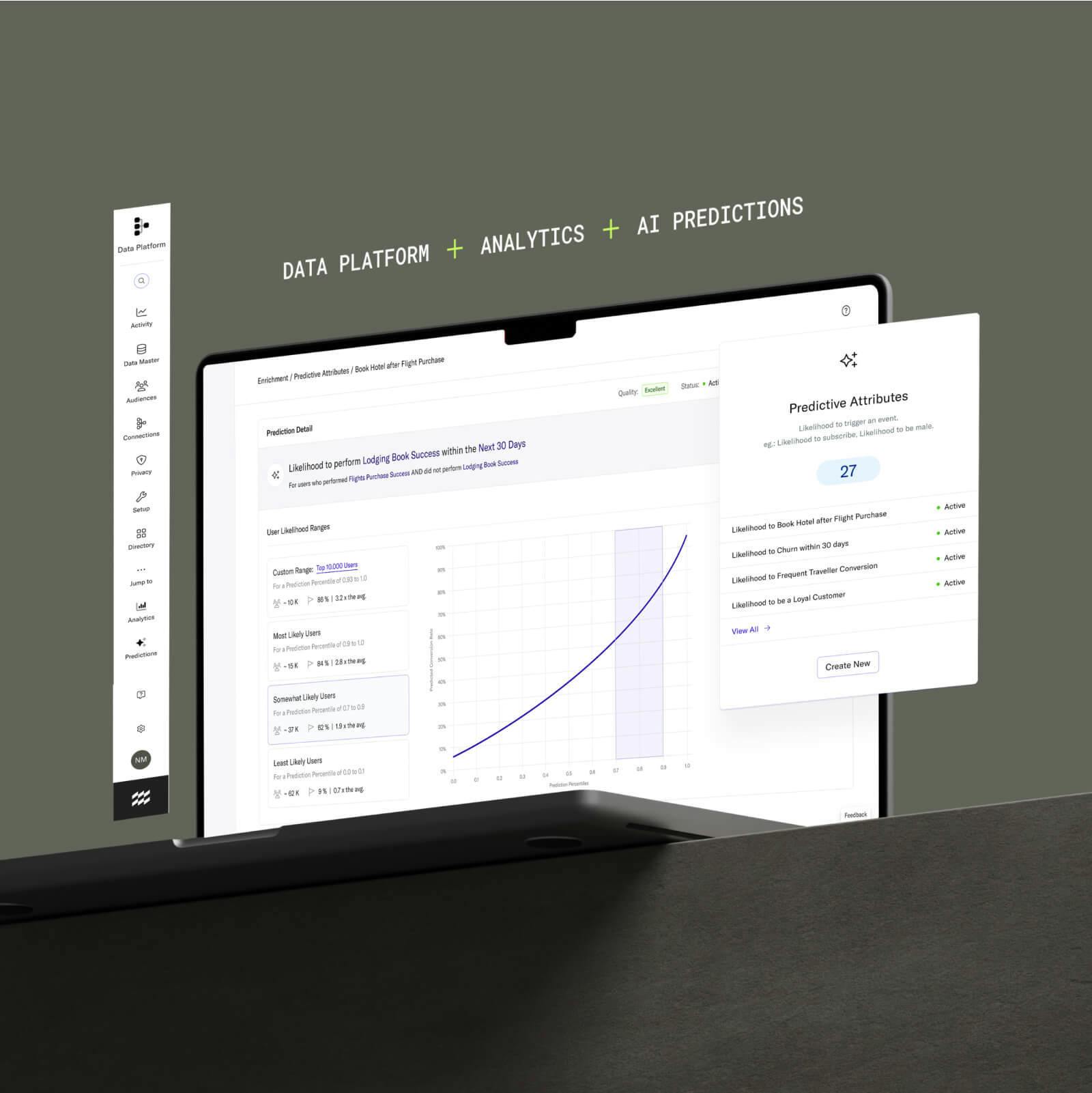

Well, now you've thought through that data foundation. You have a baseline you can build from, and you can get your data where it needs to go really rapidly. Once customers have this, they can send data to their analytics platforms, and they can also immediately start taking advantage of it through a predictive and recommendation lens. That's really where ClearBrain comes to the table here, and where we work with machine learning vendors.

So with that, I'm going to start handing off to you, Bilal, and you can take it from there.

Stage 2: Predictive segmentation

Bilal: Thanks, Adam, and thanks for walking through the foundation of what's necessary to enable machine learning automation. So, taking a step back again, the way both ClearBrain and mParticle view the world is that there are four stages of predictive personalization. The high-level objective is to be as good as Netflix, Amazon, and Uber at personalizing user experiences to the individual: the right message, at the right time, in the right place.

And again, what's blocking most companies from doing this stems from what's missing across these four stages, which is the machine learning automation component. Those companies are able to map intent or interest by content, product, or price, recommend specific content, push recommendations, and drive incremental revenue by targeting the right person.

As Adam just walked through, the first stage in enabling this kind of machine-learning-based automation (using statistics on past behavior to recommend future actions) is instrumenting your data in the right format. Once you've used a tool like mParticle, you'll have instrumented the entire user journey from first touch to conversion and captured all of the digital signals a machine learning model can actually understand.

The second stage that then becomes important is predictive segmentation. Once you've instrumented all this data on the right foundation, you have the user journey mapped in a way that gives a machine learning model a base of signals to leverage. And the goal of predictive personalization is to optimize for a conversion event; say, add to cart in this example.

With traditional rule-based segmentation, what you would do if you didn't have machine learning is pick a couple of attributes, or events, to define the segment of users you would target with an add-to-cart message. For instance, you might say you want to retarget people with an add-to-cart message for, say, a banana versus an apple, based on the fact that they did some other things related to apples, or they were in a specific region, or they came from a specific referral source. But that requires your own human intuition, which is often pretty good but is limited in the number of parameters you can use to define that audience.

So instead, when you look at this through a predictive lens, what if you could flip this logic on its head? Instead of segmenting by two, three, four, or five attributes to define your add-to-cart audience, you could build that audience from hundreds, if not thousands, of signals: every behavior and every attribute you've instrumented in a tool like mParticle, used out of the box. Then you target on just one attribute, which is the user's likelihood to add to cart.
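The contrast between the two approaches can be sketched in a few lines of Python. The user fields, the hand-picked rules, and the 0.5 threshold are all hypothetical; `add_to_cart_score` is assumed to have been produced by some trained model that already folds many signals into one number.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class User:
    user_id: str
    region: str
    referral_source: str
    viewed_apples: int
    add_to_cart_score: float  # hypothetical model output in [0, 1]


def rule_based_segment(users: List[User]) -> List[str]:
    # A few attributes chosen by human intuition.
    return [u.user_id for u in users
            if u.viewed_apples >= 2 and u.region == "west"
            and u.referral_source == "email"]


def predictive_segment(users: List[User], threshold: float = 0.5) -> List[str]:
    # One attribute: the predicted likelihood to add to cart.
    return [u.user_id for u in users if u.add_to_cart_score >= threshold]


users = [
    User("u1", "west", "email", 3, 0.82),
    User("u2", "east", "search", 0, 0.71),   # missed by the rules
    User("u3", "west", "email", 2, 0.12),    # matched by rules, unlikely to convert
    User("u4", "west", "social", 5, 0.64),
]
print(rule_based_segment(users))
print(predictive_segment(users))
```

The rules and the score disagree on three of the four users, which is the gap the talk describes: the rules only see what a person thought to encode.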

The problem is that a human can't go through and develop the signals and weights for every permutation of every attribute and every behavior. Often, the relative weighting of those behaviors and attributes toward the goal of add to cart is also going to change: every day, every week, by cohort, by region, by location. So how can you handle all those permutations? That's where a machine learning model comes in. If you use a tool like ClearBrain, what we essentially do is take the data you instrumented in step one (which Adam outlined with mParticle) and automatically ingest and transform it into a machine-readable format.

Machine learning models really only understand numeric inputs, such as binary or continuous variables. So an automated platform can abstract away a lot of that complexity for the marketer, leaving a point-and-click interface where you define the conversion event, say add to cart. The model then automatically looks at who added to cart in the past and who didn't, compares their attributes and behaviors, and weighs them appropriately.
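As a rough illustration of "transform into a machine-readable format, then compare who converted and who didn't," here is a minimal Python sketch. The talk does not describe ClearBrain's actual features or model, so this swaps in simple event counts as numeric features and plain logistic regression trained with batch gradient descent; the vocabulary, histories, and hyperparameters are all made up.

```python
import math
from collections import Counter
from typing import List, Sequence, Tuple

VOCAB = ["view_product", "search", "app_open"]  # hypothetical event names


def featurize(events: Sequence[str], vocabulary: List[str]) -> List[float]:
    """Turn a user's raw event names into a numeric vector of event counts."""
    counts = Counter(events)
    return [float(counts[name]) for name in vocabulary]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic_regression(X: List[List[float]], y: List[int],
                              lr: float = 0.1, epochs: int = 2000
                              ) -> Tuple[List[float], float]:
    """Batch gradient descent on log loss; returns (weights, bias)."""
    n = len(X[0])
    w, b = [0.0] * n, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n, 0.0
        for xi, yi in zip(X, y):
            # Prediction error for this user (predicted probability minus label).
            err = sigmoid(sum(wj * xj for wj, xj in zip(w, xi)) + b) - yi
            for j in range(n):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / len(X) for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / len(X)
    return w, b


def predict_proba(x: List[float], w: List[float], b: float) -> float:
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


# Past users: event history plus whether they added to cart (1) or not (0).
history = [
    (["view_product"] * 3 + ["search"], 1),
    (["view_product"] * 2 + ["app_open"], 1),
    (["app_open"] * 2, 0),
    (["search", "app_open"], 0),
]
X = [featurize(events, VOCAB) for events, _ in history]
y = [label for _, label in history]
w, b = train_logistic_regression(X, y)
```

A real system would use far more users and signals, plus regularization and a held-out evaluation set; the sketch only shows the shape of the workflow.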

This way you can build a prioritized list of users by their probability to actually take the action you're trying to incentivize, rather than relying on heuristics or guesses to assemble the right mix of attributes or behaviors from the past. And so that's the second stage.
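Producing that prioritized list from per-user probabilities is a sort. This is a minimal Python sketch, assuming the scores are already available as a dict; the user IDs, scores, and column name are hypothetical. It also writes the list out as CSV, the form in which such a list often ends up.

```python
import csv
import io
from typing import Dict, List, Optional, Tuple


def prioritize(scores: Dict[str, float],
               top_k: Optional[int] = None) -> List[Tuple[str, float]]:
    """Return (user_id, probability) pairs, highest probability first.

    Ties are broken by user_id so the output is deterministic.
    """
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return ranked if top_k is None else ranked[:top_k]


def to_csv(ranked: List[Tuple[str, float]],
           score_name: str = "add_to_cart_score") -> str:
    """Serialize the ranked list as CSV text, header row first."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow(["user_id", score_name])
    writer.writerows(ranked)
    return buffer.getvalue()


scores = {"u1": 0.12, "u2": 0.87, "u3": 0.55, "u4": 0.87}
top = prioritize(scores, top_k=3)
print(to_csv(top))
```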

Stage 3: Customized recommendations

The third stage: once you have this predictive segment, you essentially have a list of users and their propensity to take an action. You have each user and a score for the add-to-cart action you're trying to incentivize. It could be a number between one and 100; in ClearBrain we give it a number between zero and one. But simply knowing what a user is going to do doesn't actually move them toward that action; they're just sitting in a CSV or a list. So what do you actually do to move them over the hump?

And so in this predictive personalization pipeline, across the four stages we're walking through, there are really three buckets of actions you can take once you have a predicted segment of users. The way we outline it, you can:

- Place them in an inclusion or exclusion group as part of an audience;

- Recommend different content;

- Or recommend different pricing.

So I'll walk through each of these and what these kind of mean in a little more detail.

Recommend them to be in an inclusion or exclusion group as part of an audience

The first is: if you have a predicted set of users (say, which users are predicted to have an affinity to buy a product in a given week), you can then define which users to include in your ad group or your Facebook Custom Audience and which ones to exclude.

Say you're optimizing for incrementality of ad spend. You should probably exclude people who have a really high probability to convert, because ad dollars spent on them are wasted: they're going to convert with or without your ad. Similarly, among the low-probability people, you'll hypothetically get people who click on ads but never actually convert, so you should probably exclude them as well. Then include just the people in the middle of the probability range, whose behavior you can actually influence. That way you maximize ROI and reduce CPA.
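The inclusion and exclusion logic described above amounts to banding users by predicted probability. This is a minimal Python sketch; the 0.2 and 0.8 cutoffs are hypothetical placeholders that would need tuning against real incrementality results.

```python
from typing import Dict, List


def split_audience(scores: Dict[str, float], low: float = 0.2,
                   high: float = 0.8) -> Dict[str, List[str]]:
    """Band users by conversion probability for ad targeting.

    - p >= high: likely to convert anyway, so exclude (saves ad spend)
    - p <  low:  unlikely to convert even if they click, so exclude
    - otherwise: the persuadable middle, so include
    """
    bands: Dict[str, List[str]] = {"include": [], "exclude_high": [], "exclude_low": []}
    for user, p in scores.items():
        if p >= high:
            bands["exclude_high"].append(user)
        elif p < low:
            bands["exclude_low"].append(user)
        else:
            bands["include"].append(user)
    return bands


audience = split_audience({"a": 0.95, "b": 0.5, "c": 0.05, "d": 0.79, "e": 0.2})
print(audience["include"])  # users to add to the ad audience
```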

Recommend different content

The second thing you can do is recommend different content. Back to the add-to-cart example: if you have predicted which users are most likely to add an apple versus a banana to their cart, you can recommend different content in your emails, your ads, or even onsite personalization. When a user has a high probability of being interested in an apple rather than a banana, instead of generically showing them the entire product catalog, you can show them the product the model recommends based on their historical profile.

This is essentially what Amazon and the other big eCommerce companies do at scale, and at the right time. Someone might be interested in an apple at 5:00 but not at 7:00. So how can you know that intent based on behavior? That's where a machine learning model can recommend the action you should take.
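Here is one way to sketch time-aware content selection in Python. It assumes per-user affinity scores have already been predicted separately for each part of the day. The daypart boundaries, the nested-dict shape, the 0.3 minimum score, and the `"full_catalog"` fallback are all hypothetical choices made for this illustration.

```python
from datetime import datetime
from typing import Dict

Affinities = Dict[str, Dict[str, float]]  # {daypart: {product: score}}


def daypart(ts: datetime) -> str:
    """Bucket a timestamp: morning 05-11, afternoon 12-17, evening 18-04."""
    if 5 <= ts.hour < 12:
        return "morning"
    if 12 <= ts.hour < 18:
        return "afternoon"
    return "evening"


def recommend(affinities: Affinities, now: datetime, min_score: float = 0.3,
              fallback: str = "full_catalog") -> str:
    """Pick the highest-affinity product for the current daypart.

    Falls back to generic content when no product is confident enough.
    """
    scores = affinities.get(daypart(now), {})
    if not scores:
        return fallback
    product, score = max(scores.items(), key=lambda kv: kv[1])
    return product if score >= min_score else fallback


user_affinities: Affinities = {
    "afternoon": {"apple": 0.72, "banana": 0.20},
    "evening": {"apple": 0.10, "banana": 0.15},
}
print(recommend(user_affinities, datetime(2018, 6, 26, 17, 0)))
print(recommend(user_affinities, datetime(2018, 6, 26, 19, 0)))
```

In this example the same user gets the apple at 5:00 PM and the generic catalog at 7:00 PM, which mirrors the apple example above.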

Recommend different pricing

The last is price elasticity. If you knew a user's probability or affinity for a specific product, you could adjust the pricing you use to market to that user. For instance, if you have a discount, offer, or coupon, you would normally offer it to everyone, because you don't have a way of knowing each user's affinity for the product. So you give a 20 percent discount to everyone.

The problem with that, similar to the ad bidding issue, is that you're wasting money on the highest-probability people. You're giving up potential revenue, because these people have a 90 percent or higher probability of buying your product; they're going to buy with or without your discount or coupon. So you probably shouldn't be giving them a discount. Again, with a machine-learning-based or predictive segmentation approach, if you knew each user's probability to convert or buy, you could set discounts in proportion to that probability and give each user a segmented price or discount, getting the most out of the supply and demand curve.
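The following Python sketch shows a discount that shrinks as purchase probability rises. The 20 percent maximum and the 90 percent cutoff come from the talk's example. The linear scaling between them is an assumption chosen for simplicity; it is not a fitted price-elasticity model and not ClearBrain's method.

```python
def personalized_discount(p: float, max_discount: float = 0.20,
                          no_discount_above: float = 0.90) -> float:
    """Return a discount rate that shrinks linearly as purchase probability rises.

    Users at or above `no_discount_above` get no discount, because they are
    expected to buy anyway. Users at p = 0 get the full `max_discount`.
    """
    if not 0.0 <= p <= 1.0:
        raise ValueError("probability must be in [0, 1]")
    if p >= no_discount_above:
        return 0.0
    return round(max_discount * (1.0 - p / no_discount_above), 4)


for p in (0.0, 0.45, 0.90, 0.95):
    print(p, personalized_discount(p))
```

A production approach would estimate how each segment's conversion rate responds to different discount levels instead of assuming a straight line.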

Those are the three ways you can personalize an experience using a predictive segmentation approach.

Stage 4: Predictive personalization

Then there's the last stage. To recap: you have instrumented your data, so you have the foundation. You have converted it into personalized, statistically based audiences built on each user's intent to convert. And in stage three, you have figured out what to actually personalize in your app.

You still need to activate on those users and deliver that experience. That's where mParticle comes back in: with the combination of ClearBrain and mParticle, you develop these recommendations and personalizations, and then you sync them into the platforms you want to use.

So with recommendations around inclusion versus exclusion groups, you can personalize your ad audiences based on whom to include or exclude. Or, if you want to recommend different content, you could sync ClearBrain scores directly into a tool like Optimizely for personalization. That gives you an attribute that defines which users should see a specific experience and which shouldn't.

Or you can send them back into mParticle through an API integration and personalize onsite experiences by recommending different content (or different discounts or coupons) directly within the site. You can also send them directly into email platforms to trigger campaigns. End to end, this gives you the vision Adam was outlining before: an automated experience that is personalized at the individual user level, rather than at a historical, audience-based level. It's based on what users actually want or need, and when, rather than a guess about what they might need, so you deliver the right experience to the right user at the right time.
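Syncing scores downstream usually means packaging them as per-user attributes. This is a minimal Python sketch that builds batched JSON bodies for such updates. The payload shape, the `user_attributes` key, the attribute name, and the batch size are all hypothetical. They are not the actual API format of mParticle, Optimizely, or ClearBrain, and sending the requests is deliberately left out.

```python
import json
from typing import Dict, Iterator


def build_attribute_updates(scores: Dict[str, float],
                            attribute_name: str = "add_to_cart_score",
                            batch_size: int = 2) -> Iterator[str]:
    """Yield JSON bodies, each carrying up to `batch_size` user attribute updates.

    Hypothetical payload shape:
    {"users": [{"user_id": ..., "user_attributes": {attribute_name: score}}]}
    """
    items = [
        {"user_id": user, "user_attributes": {attribute_name: round(score, 4)}}
        for user, score in sorted(scores.items())
    ]
    for start in range(0, len(items), batch_size):
        yield json.dumps({"users": items[start:start + batch_size]})


batches = list(build_attribute_updates({"u2": 0.87, "u1": 0.1234567, "u3": 0.5}))
for body in batches:
    print(body)  # in practice, POST each body to the destination's ingestion API
```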

And that closes the four stages of predictive personalization. It also shows how tools like ClearBrain and mParticle combine to give you the foundation to automate this entire process in minutes, rather than spending months, if not years, building it in house. So that's the holistic journey.

Joint mParticle + ClearBrain workflow

Adam: Awesome. Thank you very much. So, you know, I think we want to get into some questions here as they're coming in. But is there anything else you want to comment on before we jump in, Bilal?

Bilal: One thing is that Adam hinted earlier at some trends around the complexity and the changing market of the data ecosystem. In a post-GDPR ecosystem, one thing that's going to be really important is that we're now operating in a world where advertising based on third-party data enrichment is getting a little more uncomfortable. At the very least, it doesn't sit well in the current political climate.

So one really promising benefit of machine learning and predictive personalization is that it lets you leverage your first-party data in a new and exciting way. You're essentially extracting new audience segments, new attributes, and new properties about your users, developing more first-party data from first-party data. That lets you target based on intent and on what the customer actually wants, rather than having to guess, use data brokers, or enrich data from other tools (which gather their data in ways that aren't always clear).

This enables you, from a statistical standpoint, to derive signals from the data you've already collected. Having mParticle as the first-party data collection foundation, and then leveraging ClearBrain on top of that, is a really exciting opportunity in the current climate to activate on more of the data you've already collected.

Adam: Yeah. That is 100 percent what we're seeing as well on our side, especially as people are responding to some of these regulatory changes.

Q&A

Awesome. So I'm going to jump in here with some of these questions. So, one question...

If you were giving advice to someone wanting to undertake a machine learning journey, what's the low-hanging fruit you would recommend they start optimizing around?

Bilal: Yeah, sure. It's going to be a little different for every company, but the way we like to position it is: what is the conversion event you are trying to maximize in your user journey? In general, the events that work best for machine learning are ones with a large sample size and a lot of digital signals.

Every company can map out its user journey from first touch to conversion and run a funnel and drop-off analysis. So I'd map out that journey and identify which conversion event is the most interesting for you. Then try to predict that action and maximize it.
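A funnel and drop-off analysis like the one described can be sketched in Python as follows. The step names and user histories are hypothetical. A user counts as reaching a step only if they completed every earlier step first, in order. The per-step counts also show the sample size behind each candidate conversion event.

```python
from typing import Dict, List, Sequence, Tuple


def steps_completed(events: Sequence[str], steps: List[str]) -> int:
    """Count how many funnel steps a user completed, in order."""
    idx = 0
    for event in events:
        if idx < len(steps) and event == steps[idx]:
            idx += 1
    return idx


def funnel_report(user_events: Dict[str, List[str]],
                  steps: List[str]) -> List[Tuple[str, int, float]]:
    """Return (step, users_reached, conversion_from_previous_step) per step."""
    reached = [0] * len(steps)
    for events in user_events.values():
        for i in range(steps_completed(events, steps)):
            reached[i] += 1
    report = []
    for i, step in enumerate(steps):
        prev = reached[i - 1] if i > 0 else reached[0]
        report.append((step, reached[i], reached[i] / prev if prev else 0.0))
    return report


STEPS = ["first_visit", "view_product", "add_to_cart", "purchase"]
journeys = {
    "u1": ["first_visit", "view_product", "add_to_cart", "purchase"],
    "u2": ["first_visit", "view_product"],
    "u3": ["first_visit", "search", "view_product", "add_to_cart"],
    "u4": ["first_visit"],
}
for step, count, rate in funnel_report(journeys, STEPS):
    print(f"{step:<13} {count:>3} users  {rate:.0%} of previous step")
```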

Another thing I think people don't leverage enough, and that I'd like to see more people do, is activating existing users. That's one benefit of machine learning: you can automate that approach. I've seen a lot of companies focus heavily on user acquisition, just driving more and more people into the funnel. But if the funnel isn't optimized for those users, you're just pushing people into a sinking ship.

It's complex to think through all the potential signals and permutations of a personalized experience after sign-up or first acquisition. That's where machine learning helps, so I'd actually recommend starting with some kind of activation, up-sell, or re-engagement use case, with a personalized, predictive approach to re-engagement. Build re-engagement scores for the people most likely to become inactive, most likely to churn, or most likely to cross-sell or up-sell.

Build predictive audiences based on those scores and automate workflows around them, such as email drip campaigns and ad retargeting campaigns that automate the post-funnel experience. That approach is tried and true, and once it's automated you can focus your energy on new user acquisition. Acquisition often requires more of a human touch: brand content, display, and other creative aspects. Let machine learning take care of the post-acquisition side.
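Once churn and up-sell scores exist, routing users into automated workflows can be as simple as the following Python sketch. The score names, thresholds, workflow labels, and the rule that churn risk takes priority over up-sell are all hypothetical choices for illustration.

```python
from typing import Dict


def route(user_scores: Dict[str, Dict[str, float]],
          churn_threshold: float = 0.6,
          upsell_threshold: float = 0.7) -> Dict[str, str]:
    """Assign each user to at most one automated workflow.

    Churn risk is checked first, so an at-risk user gets re-engagement
    rather than an up-sell pitch.
    """
    plan = {}
    for user, scores in user_scores.items():
        if scores["churn"] >= churn_threshold:
            plan[user] = "reengagement_email_drip"
        elif scores["upsell"] >= upsell_threshold:
            plan[user] = "upsell_retargeting_ads"
        else:
            plan[user] = "no_action"
    return plan


plan = route({
    "u1": {"churn": 0.80, "upsell": 0.90},
    "u2": {"churn": 0.10, "upsell": 0.75},
    "u3": {"churn": 0.30, "upsell": 0.20},
})
print(plan)
```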

Adam: Yeah, and I would add to that...One of the reasons we think a CDP plus machine learning solution is right is that when you're undertaking this machine learning journey and identifying those conversion events, a lot of times the marketer may not know exactly what they are. Right? That's a bit of a journey as well. So you need flexibility in how you iterate on which events are important for your business and which ones you should be optimizing around. And I think the foundational layer that the CDP brings, plus the point-and-click integration with ClearBrain, allows you to evolve.

As you learn more, you might realize...Well, maybe this event isn't as essential to driving people through the funnel as we thought...So maybe we should actually be optimizing higher up in the funnel, around how we get people to just provide consent...and allow us to collect the information that will help us deliver a more targeted experience downstream. Having the foundational layer, where everything is standardized, gives you flexibility in where you start. And from there, back to what Bilal was talking about, you can optimize around what is important to your particular business.

Bilal: That's actually a really good point. One thing we've seen with customers who use ClearBrain is that an automated predictive audience segmentation tool lets you answer a lot of those questions much faster. You can point and click, define an event you have a hunch about and want to predict, and within minutes you get the report, or the scores, for all your users.

And what people often find, as I mentioned a couple of slides back, is that the machine learning model automatically weighs every previous behavior, or every previous attribute a user could have, before that conversion event, and assesses its relative importance. In the platform we actually expose each of those weights and attributes and their sensitivities, indicating which ones are more important and which are less important.
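The idea of a model that weighs every prior behavior can be sketched with plain logistic regression trained by gradient descent. This is an assumption chosen for illustration, not a description of ClearBrain's actual model. The toy features and labels below are invented; the learned weights stand in for the attribute importances described above, with a larger absolute weight meaning more influence on the predicted conversion.

```python
import math
from typing import List, Tuple

FEATURES = ["viewed_pricing", "used_search", "came_from_ad"]  # hypothetical attributes

# Toy, made-up rows: (0/1 feature vector, converted?)
ROWS: List[Tuple[List[int], int]] = [
    ([1, 1, 1], 1), ([1, 1, 0], 1), ([1, 0, 1], 1), ([1, 0, 0], 1),
    ([0, 1, 1], 1), ([0, 1, 0], 0), ([0, 0, 1], 0), ([0, 0, 0], 0),
]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(rows, n_features, lr=0.5, l2=0.01, epochs=5000):
    """Batch gradient descent on L2-regularized logistic loss (bias not penalized)."""
    w = [0.0] * n_features
    b = 0.0
    n = len(rows)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for x, y in rows:
            # Prediction error for this row: predicted probability minus label.
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            for j in range(n_features):
                grad_w[j] += err * x[j]
            grad_b += err
        for j in range(n_features):
            w[j] -= lr * (grad_w[j] / n + l2 * w[j])
        b -= lr * grad_b / n
    return w, b


weights, bias = train_logistic(ROWS, len(FEATURES))

# Rank attributes by absolute weight, most influential first.
ranked = sorted(zip(FEATURES, weights), key=lambda fw: abs(fw[1]), reverse=True)
for name, weight in ranked:
    print(f"{name:>15}: {weight:+.2f}")
```

In this toy data every user who viewed pricing converted, so `viewed_pricing` comes out with the largest weight. Comparing raw weights like this is only reasonable because all features share the same 0/1 scale.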

Oftentimes customers will find...Oh, for this conversion event, what I really should be targeting is this upstream event, because the actual conversion event often has too few users doing it. So let's maximize for the next thing up the funnel, which a larger sample of people actually do.

A good example of this is churn. Predicting who's going to churn isn't necessarily that helpful, because by the time they're about to churn, you've probably already lost them. What we find is that when customers used a predictive segmentation tool like ClearBrain to define the indicators of churn, they were able to work backwards...and say, Oh, these are the things upstream of churn that I should have been optimizing for...and then they make predictions off of those things and optimize for them instead.

And so yeah, totally right. And the benefit of mParticle is that you can instrument all of these things across the entire user journey, and the model will determine their relative importance automatically.

Adam: And you started to hit on this when you talked about activating on existing users...As you get started, if it's really about how to prevent churn, the behaviors that indicate churn may be stored in a historical database, or maybe they're spread across a bunch of silos...

Is it possible to take advantage of some of that historical data, in terms of how you think about what you might want to do moving forward, when you bring on ClearBrain?

Bilal: Yeah. Great question. Going back to how machine learning generally works: it looks at past behavior to predict future behavior. So the more history you have, the better the signals it can derive about what actually determines the behavior you're predicting.

So one of the great features of mParticle is that you can replay your data from the beginning of instrumentation. When you plug it into ClearBrain, it's not net new; it's not just new events you have to wait to collect. You can replay all your historical data, which gives you six months (or years) of history to inform those predictive models from the get-go.

Another really important point: the signals that matter most for most predictive models, regardless of vertical, are often attribution. What referrer source did they come from? Which UTM? What browser or device are they using? Only by going back to the earliest history for an individual user, which replay makes possible, can you capture those first touch points and have them automatically leveraged in the model. Things like attribution and first touch are really important signals in any predictive, propensity-based model, so having that historical information is really important as well.
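A minimal sketch of deriving first-touch attribution features from replayed history. It assumes a hypothetical flattened event record with a user ID, a timestamp, an optional UTM source, and a device field; this is not mParticle's event schema, and it only shows why the earliest event per user matters.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Event:
    """Hypothetical flattened event record, for illustration only."""
    user_id: str
    timestamp: int  # epoch seconds
    name: str
    utm_source: Optional[str] = None
    device: Optional[str] = None


def first_touch_features(events: List[Event]) -> Dict[str, Dict[str, Optional[str]]]:
    """Keep each user's earliest event and expose its attribution fields as features."""
    first: Dict[str, Event] = {}
    for e in events:
        current = first.get(e.user_id)
        if current is None or e.timestamp < current.timestamp:
            first[e.user_id] = e
    return {
        uid: {"first_utm_source": e.utm_source, "first_device": e.device}
        for uid, e in first.items()
    }


# Replayed history arrives in no particular order.
replayed = [
    Event("u1", 1_700_000_500, "purchase", device="ios"),
    Event("u1", 1_600_000_000, "app_open", utm_source="newsletter", device="ios"),
    Event("u2", 1_650_000_000, "app_open", utm_source="paid_social", device="android"),
]
features = first_touch_features(replayed)
print(features)
```

If only the recent `purchase` event for `u1` were available, `first_touch_features(replayed[:1])` would return `None` for the UTM source: that missing attribution signal is what replaying older data recovers.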

Adam: So one of the things we talked a little bit about was the sort of customers that can really take advantage of this. Having high scale, in terms of your user base and activity, obviously gives you a great sample size to work from. But what we see is that a lot of the more innovative, forward-thinking companies or individuals that want to take advantage of this tend to be disruptive startups, where they're trying to change how businesses are built. So help us understand...

When it comes to having a sample size with enough data, when does it make sense to start thinking about predicting what's going to happen, or applying machine learning or AI to that data?

Bilal: Yeah, totally. I can put this a couple of different ways...One is the general point that you need enough sample size to build a predictive model and to have an accurate model. What we see at ClearBrain, having onboarded hundreds of companies onto the product at this point, is that a good threshold for getting an accurate model is probably somewhere between 25,000 and 50,000 users. If you think about what a prediction is actually doing in the end, it's basically selecting a subset of the users and identifying them as high probability. So for a typical conversion event, 25,000 to 50,000 users tends to be a good threshold.

The problem actually comes more when you get into stage three or four, which we mentioned: what do you do with that information? We find that when you're doing a predictive personalization experience, getting an accurate prediction is one thing. But then you need to run an experiment to prove that the personalization was actually effective, and for that you usually need a larger sample size.

So again, when you're predicting off of 25,000 to 50,000 users, you're basically identifying maybe 500 to 1,000 people who have a high probability of converting in a given week. If you then do a split test and experiment on whether those people actually converted, you have to run that experiment for at least a month. Back in my Optimizely days, we found that the minimum number of users, or visitors, you needed to run an experiment to statistical significance was about 25,000 to 50,000 on average. So if you only have a maximum of 25,000 to 50,000 users, you have to run that 1,000-user subset for a pretty long period of time. The good threshold we usually see is ideally something like 250,000 users total. That's what we see as the minimum threshold.
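The arithmetic behind the 250,000-user threshold can be checked with a few lines of Python. The sketch assumes, as implied by the quoted figures, that about 2% of users (500 of 25,000, or 1,000 of 50,000) are flagged as high-probability each week, that this share holds at larger scale, that each week's flagged users are new to the test, and that the experiment needs 25,000 users, the low end of the range quoted above. The function name is hypothetical.

```python
import math


def weeks_to_fill_experiment(total_users: int, flagged_pct: int, required_sample: int) -> int:
    """Weeks until the weekly high-probability subset adds up to the required sample.

    Assumes flagged_pct percent of total_users is flagged each week and that
    each week's flagged users are new to the experiment (no overlap).
    """
    flagged_per_week = total_users * flagged_pct // 100
    return math.ceil(required_sample / flagged_per_week)


FLAGGED_PCT = 2           # 500 / 25,000 and 1,000 / 50,000 both equal 2%
REQUIRED_SAMPLE = 25_000  # low end of the quoted experiment size

for total in (25_000, 50_000, 250_000):
    weeks = weeks_to_fill_experiment(total, FLAGGED_PCT, REQUIRED_SAMPLE)
    print(f"{total:>7,} users -> {weeks} weeks")
```

Under these assumptions, 25,000 users need about 50 weeks to fill the experiment, 50,000 users about 25 weeks, and 250,000 users about 5 weeks, which is in the range of a month-long test.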

It also varies by vertical. We often don't work much with B2B companies because, again, they have a low sample size and they care more about account-based predictions than user-based predictions. It's also hard to predict things where the latency of the signal is very long: in the B2B SaaS space you're often predicting a conversion that happens over three months, if not a year, which is really hard to predict on a regular basis. So what you're often predicting in B2B is just lead scoring, the first touch, which in and of itself has very few digital signals, so it's hard to predict in general. In my view, the companies best enabled are mid-market, medium-stage companies that have a reasonable sample size of users and a product with high digital engagement that generates a lot of signal during that journey.

Adam: Great. That's super helpful. One last question here and then we're going to wrap up. As a personalization and AI expert...

What is the brand that you personally think of as 'the holy grail', the one that is really killing it? If there were one brand, which one would you aspire to enable your customers to become?

Bilal: I'd say for me...it's seeing what Amazon and Uber do. I mean, that's two brands, not one, in different contexts. Uber is able to personalize really well, down to the individual moment of action. In a second, they can personalize the price, the recommendation, and the offer, or recommend specific routes or journeys for you. With Uber Eats, they're able to recommend specific products you might be interested in, personalize the menu, and personalize the category, and I think they do that really well. I think that's really the emblematic journey that anyone would want. The problem is that they probably have more data engineers and data scientists than almost any other company. There's a limited supply of data engineers and data scientists, just as there's a limited supply of drivers, and they kind of have a monopoly on those resources. So I think it's very hard for companies to replicate Uber.

Another good example is probably Stitch Fix. Outside of Uber, they probably have the highest percentage of data scientists or data engineers I have seen at any company. They also personalize different aspects of the marketing journey, as well as within their product. The admirable thing about them is that Stitch Fix wasn't a company you'd traditionally think of as hiring for, or focusing on, being predictive-first. But they did it from a very early stage, and I think that was pretty admirable on their part.

And now, in an ecosystem where there is still a limited supply of data engineers and data scientists, being a prediction-ready or prediction-first company is extremely important. But most companies don't have access to those technologies or those resources. So how do you compete with those types of companies in eCommerce and B2C without deploying millions of dollars toward the problem? I think that's where solutions like ClearBrain and mParticle help a lot.

Thank you!

Adam: Awesome. Well, thank you very much to Bilal and the ClearBrain team for 1) coming into the ecosystem and becoming a great new partner that we're really excited about, and 2) joining us on the webinar here. This is awesome. We are excited to bring our mutual customers, and their customers, great experiences that are highly relevant to those individuals moving forward.

Awesome. Alex, should we wrap up here?

Alex: No, I think this has been great. Thank you all so much for attending and we'll follow up with the recording in the coming days.