How we improved performance and scalability by migrating to Apache Pulsar

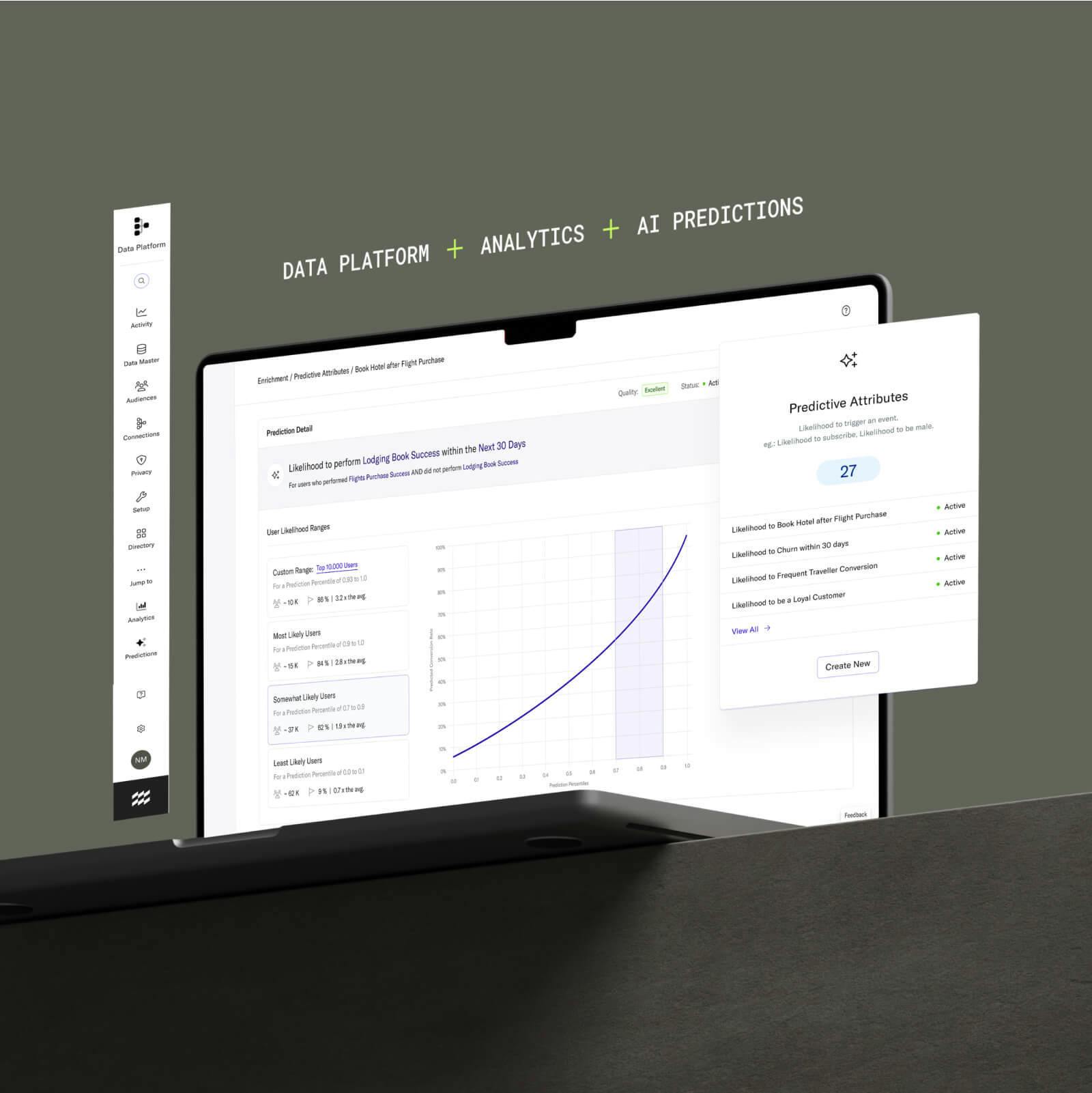

We recently made a significant investment in the scalability and performance of our platform by adopting Apache Pulsar as the streaming engine that powers our core features. Thanks to the time and effort we spent on this project, our mission-critical services now rest on a more flexible, scalable, and reliable data pipeline.

Behind every enterprise software application sits a complex and dynamic service architecture that, by and large, the people using this software don’t care about. Ideally, they shouldn’t have to. End users are typically only concerned with whether the software does what it purports to do. The best software backends behave like the hulking mass of an iceberg that rests underwater: unseen, undetected, yet quietly keeping the visible portion afloat.

Every month, mParticle processes 108 billion API calls, and receives and forwards more than 686 billion events to help companies around the world like CKE Restaurants, Airbnb, and Venmo manage their customer data at scale. Executing this consistently and with razor-thin fault tolerance means that every component within our backend has to be completely optimized for the process that it executes. So back in 2020, when we noticed that our Audiences service was experiencing some data degradation as a result of the message queue that powered it (Amazon SQS at the time), our engineering team knew they had to search for another solution.

The search for a new streaming engine

Within mParticle’s backend, streaming engines move data from the locations where it is ingested, like client SDKs and server-to-server feeds, to the services that consume it, such as Audiences. Since mParticle ingests data from multiple sources and delivers this data to multiple services in real time, multiple queues are necessary to handle message delivery operations between clients and services within our architecture.

Amazon SQS and concurrency issues

Prior to adopting Apache Pulsar, Amazon SQS powered data transfers between the components within the mParticle Audience service. While SQS met the needs of most of our use cases at the time (and continues to do so on other services within our architecture), it lacked the ability to serialize incoming messages in a way that would prevent overwrites in the read/modify/write cycles that power Audiences.

With Amazon SQS, when multiple services (client SDKs and S2S feeds, for example) performed read/modify/write tasks against a common database at the same time, those tasks could overlap, resulting in a race condition that looks like this:

- Service A reads, and begins working

- Service B reads, and begins working

- Service A writes to the database

- Service B overwrites Service A’s entry in the database
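
The four steps above can be replayed in a few lines. This is a minimal sketch, not mParticle's code: the in-memory `db`, the `read` and `write` helpers, and the counter stored under `user-1` are all hypothetical stand-ins for the shared database and the two services.

```python
# Hypothetical in-memory "database" holding a single counter for one user.
db = {"user-1": 0}

def read(key):
    return db[key]

def write(key, value):
    db[key] = value

# Interleaved read/modify/write, in the same order as the list above.
a_value = read("user-1")        # Service A reads 0 and begins working
b_value = read("user-1")        # Service B reads 0 and begins working
write("user-1", a_value + 1)    # Service A writes 1
write("user-1", b_value + 1)    # Service B writes 1, overwriting A's update

interleaved_result = db["user-1"]

# The same two updates, run strictly one after the other.
db["user-1"] = 0
for _ in range(2):
    write("user-1", read("user-1") + 1)

serialized_result = db["user-1"]

print(interleaved_result, serialized_result)  # prints "1 2"
```

Two increments were issued in both runs, but the interleaved run only records one of them. Serializing the tasks is what recovers the second update.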

These conditions were not occurring at a very high rate overall. In the case of a widely-used core service like Audiences, however, this concurrency issue could potentially result in an unacceptable number of inaccurate entries over time. This was especially apparent during the development of our Calculated Attributes feature, which allows customers to run a calculation on a particular user or event attribute. When a Calculated Attribute is created, mParticle continuously updates the value for this attribute automatically using raw data streams of user and event data. Given the demands of this feature, the unwanted data overwrites we were seeing with SQS would not be acceptable.

Vetting new solutions: Apache Kafka vs. Apache Pulsar

To get around this concurrency challenge, our engineers looked for a solution that would allow us to serialize incoming messages in Audiences as well as other services, and ensure that only one read/modify/write task could run at any given time. Our team explored a host of data streaming services that delivered this capability, and identified two frontrunners, both from Apache: Kafka and Pulsar.

These solutions stood out from the pack because both allow users to supply a key on which incoming data streams are to be separated. By using mParticle ID (MPID) as this identifier, we can ensure that data streams from the same user are processed sequentially rather than concurrently. This would prevent the errors and data consistency issues we were experiencing when processing simultaneous requests from the same user using SQS.
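
As a rough illustration of keyed routing, the sketch below assigns each message to a partition by hashing its MPID. The partition count, the `partition_for` and `route` helpers, and the use of CRC32 are assumptions made for the example; Kafka and Pulsar each use their own hashing schemes, and this is not their client API.

```python
import zlib
from collections import defaultdict

NUM_PARTITIONS = 4  # hypothetical partition count

def partition_for(mpid: str) -> int:
    # A stable hash means the same MPID always maps to the same partition.
    return zlib.crc32(mpid.encode("utf-8")) % NUM_PARTITIONS

def route(messages):
    # Group (mpid, payload) pairs by partition, preserving arrival order.
    partitions = defaultdict(list)
    for mpid, payload in messages:
        partitions[partition_for(mpid)].append((mpid, payload))
    return partitions

messages = [
    ("mpid-1", "a"),
    ("mpid-2", "b"),
    ("mpid-1", "c"),
    ("mpid-3", "d"),
    ("mpid-1", "e"),
]
partitions = route(messages)

# Every message for mpid-1 sits in one partition, in the order it arrived.
mpid_1_payloads = [
    payload
    for mpid, payload in partitions[partition_for("mpid-1")]
    if mpid == "mpid-1"
]
print(mpid_1_payloads)  # prints "['a', 'c', 'e']"
```

If each partition is drained by a single worker at a time, two updates for the same user can never be in flight together, while different users can still be processed in parallel.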

In the diagram below, we see how this ability to partition batches based on an identifier circumvents the race conditions in read/modify/write tasks that we were previously experiencing:

The gray box on the left-hand side of the diagram represents our upstream services. These are the transformation processes that need to occur before user and event data can be useful in a service like Audiences. These processes include data quality assurance, profile enrichment, identity resolution, and others. Without unified and accurate data, building and forwarding audiences to downstream services would not be possible, which is why these upstream services are critical to the value that customers realize from mParticle.

From these upstream services, the data is handed off to the streaming engine, where it is consumed and partitioned by MPID. Separate data streams are also assigned a level of priority to ensure that event streams are given precedence over bulk data. These partitioned batches are then handed off to the Audience service, where end users can leverage the data to create target audiences and generate calculated attributes.
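
The article does not describe how stream priority is implemented, so the following is only one plausible sketch. It assumes two stream types, `event` and `bulk`, and a hypothetical `PriorityDispatcher` built on a heap; a sequence counter keeps batches of equal priority in arrival order.

```python
import heapq
import itertools

# Hypothetical priority levels: lower numbers are served first.
PRIORITY = {"event": 0, "bulk": 1}

class PriorityDispatcher:
    def __init__(self):
        self._heap = []
        self._seq = itertools.count()  # tie-breaker that preserves FIFO order

    def put(self, stream_type, batch):
        heapq.heappush(self._heap, (PRIORITY[stream_type], next(self._seq), batch))

    def get(self):
        _, _, batch = heapq.heappop(self._heap)
        return batch

    def __len__(self):
        return len(self._heap)

dispatcher = PriorityDispatcher()
dispatcher.put("bulk", "bulk-1")
dispatcher.put("event", "event-1")
dispatcher.put("bulk", "bulk-2")
dispatcher.put("event", "event-2")

drained = [dispatcher.get() for _ in range(len(dispatcher))]
print(drained)  # prints "['event-1', 'event-2', 'bulk-1', 'bulk-2']"
```

Event batches are handed off ahead of bulk batches even when the bulk data arrived first, which is the precedence described above.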

Comparing consumer group scaling capabilities of Pulsar and Kafka

We tested both Pulsar and Kafka on a trial basis in a development environment. While we evaluated both solutions on several parameters, the most critical metric was their ability to scale up and down with increases and decreases in the number of consumers. This is because the load demand on the Audiences service (as well as other services that we might potentially migrate to Pulsar in the future) is highly elastic. For instance, when a customer releases a new feature or launches a new campaign, they may create and forward many more audiences in a shorter period of time than they usually do. That’s why it was critical that the streaming provider we chose was able to match server resources to spikes and drops in data usage, both to ensure performance stability and avoid unnecessary costs.

Whenever a new instance of the Audience service comes online, it needs to connect to the message bus, register, and begin receiving messages, whether from Kafka or Pulsar. Once we began testing both services, our engineers noticed that whenever instances connected to or disconnected from Kafka, it had to go through a recalculation process (a consumer group rebalance) to determine which consumers received which data. This process could take up to a few minutes, and during this time data transfer to all other nodes would be blocked.

Pulsar, on the other hand, comes with an auto-scaling feature that minimizes downtime and data loss when nodes come online and go offline. While we did see lag with Pulsar when consumers were added or dropped, it lasted only a few seconds as opposed to a few minutes. During this period, Pulsar was also able to keep messages flowing to previously-connected nodes without failure. Given the rate at which we scale nodes up and down within our Audience service, it was ultimately Pulsar’s dynamic consumer scaling capability that made our engineering team decide to adopt it as the new streaming engine behind Audiences.

A return on our investment: Platform stability and new feature enablement

The full process of adopting Pulsar, from recognizing that we needed a new solution to power certain services to deploying fully in production, took approximately 18 months. It has now been nine months since fully cutting over to Pulsar as the message queue behind our Audience service, and in that time we have realized significant benefits. These include:

- Improved stability and accuracy: Pulsar allows us to deliver more consistent and accurate data to a critical service within our product.

- The development of a key platform feature: Without the confidence that the data flowing into the Audience service was at this level of accuracy, rolling out Calculated Attributes would not have been possible.

We make these investments so our customers don’t have to. By putting this time and effort into our own data infrastructure, we’re ensuring that our customers realize maximum value from all of our services.

Senior Vice President of Engineering, mParticle

While Pulsar is now handling all data flows into the Audience service, we still run SQS alongside this new solution as a failsafe. “We still have a notion of a circuit breaker built into our message processing,” says Tim Corbett, a software engineer who was instrumental in the migration process. “If any problem with Pulsar is detected, we will automatically switch back to SQS so as to prevent any problems before they occur. Instead of 100% data loss or downtime, we seamlessly fall over to the SQS path while we work on the Pulsar path.”
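
The failover Corbett describes can be sketched as a small circuit breaker around two publish paths. Everything here is illustrative: the `CircuitBreakerPublisher` class, the failure threshold, and the `send_primary` and `send_fallback` functions are hypothetical, and the article does not say how mParticle detects a problem or decides to switch back.

```python
class CircuitBreakerPublisher:
    """Send via the primary path; use the fallback once failures pile up."""

    def __init__(self, primary, fallback, failure_threshold=3):
        self.primary = primary
        self.fallback = fallback
        self.failure_threshold = failure_threshold  # hypothetical setting
        self.failures = 0

    @property
    def is_open(self):
        # "Open" means the primary path is currently being bypassed.
        return self.failures >= self.failure_threshold

    def publish(self, message):
        if not self.is_open:
            try:
                self.primary(message)
                self.failures = 0  # a success clears earlier failures
                return "primary"
            except Exception:
                self.failures += 1
        # Either the breaker is open or the primary attempt just failed.
        self.fallback(message)
        return "fallback"

    def reset(self):
        # Called once the primary path is known to be healthy again.
        self.failures = 0

# Stand-ins for the two message paths.
sent_primary, sent_fallback = [], []
health = {"primary": True}

def send_primary(message):
    if not health["primary"]:
        raise ConnectionError("primary path unavailable")
    sent_primary.append(message)

def send_fallback(message):
    sent_fallback.append(message)

publisher = CircuitBreakerPublisher(send_primary, send_fallback, failure_threshold=2)
routes = [publisher.publish("m1")]
health["primary"] = False
routes += [publisher.publish(m) for m in ("m2", "m3", "m4")]
print(routes)  # prints "['primary', 'fallback', 'fallback', 'fallback']"
```

Each message is delivered through exactly one path, so a failure on the primary path does not drop data. A production breaker would typically also probe the primary path periodically and call something like `reset` when it recovers; that logic is omitted here.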

Perhaps the best indication of Pulsar’s success, however, is that it hasn’t drawn attention to itself. “For the most part, people have forgotten it’s running, and that’s good,” Corbett says. “The less you have to think about a critical piece of your infrastructure, the better.”