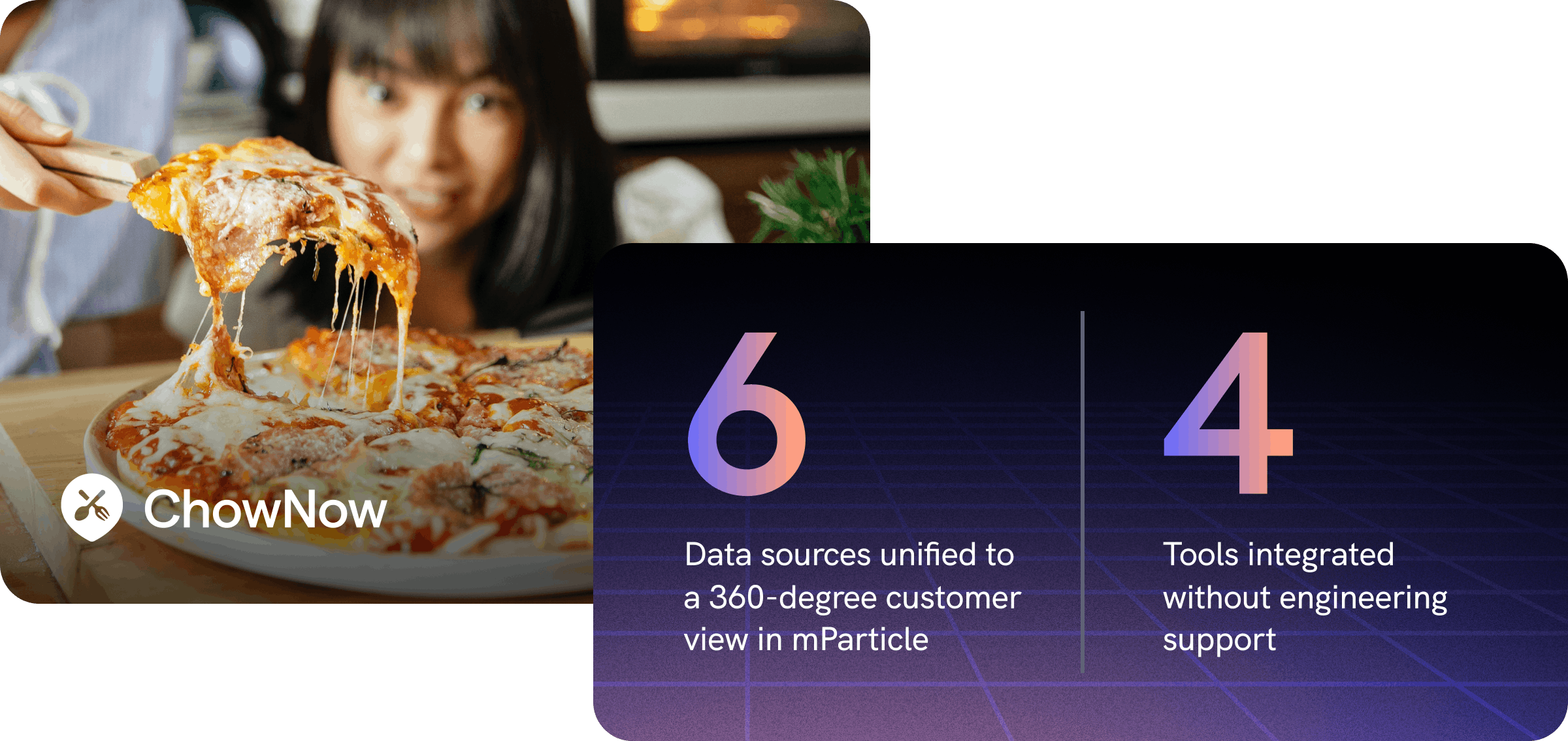

How ChowNow improved data quality throughout their MarTech stack

Using mParticle Data Master, ChowNow has been able to establish a centralized data plan and power tools across their MarTech stack with high-quality customer data, resulting in better experimentation and personalization.

Online ordering and food delivery options have exploded in recent years due to increased digital adoption and the advancement of ordering platforms, among other reasons. The developments that have made it easier for consumers to order delivery, however, have also made it more difficult for independently owned restaurants to survive. Popular ordering platforms charge significant fees to restaurants every time an order is placed. With independent restaurants facing tight margins to begin with, order fees are making it infeasible for mom-and-pop businesses to reach their customers through digital channels, and therefore impossible for them to participate in the modern dining economy.

ChowNow was launched in 2011 to help local restaurants navigate the online ordering landscape. Offering white-label ordering solutions, data-driven marketing tools and services, and their own commission-free online ordering platform, ChowNow helps mom-and-pop restaurants grow their takeout and delivery businesses without being subject to high commission fees.

One of the key traits that helps ChowNow thrive in the competitive delivery landscape is their data strategy. ChowNow’s centralized Data & Analytics (DNA) team leverages customer insights to improve their own ordering product, deliver relevant communications to consumers, and help restaurants provide better experiences to diners. As the number of sources from which they were collecting customer data increased, however, the DNA team found that their data set was becoming increasingly duplicative, inaccurate, and siloed. This made it difficult for product and marketing teams to use this information to drive business initiatives, and increased the amount of engineering work required to support day-to-day data use cases.

Jordan Plecque, Manager on the DNA team, recognized that there was an opportunity to implement a Customer Data Platform (CDP) that would improve customer data quality while also making it easier for product and marketing teams to access the data they required.

Due to the multifaceted nature of ChowNow’s business, there were several specific needs Jordan wanted to meet.

- Cross-platform data collection

Diners place orders with ChowNow’s iOS, Android, and website delivery platforms. It was therefore important to have a single data collection point for all platforms, to be able to understand cross-platform engagement, and to be able to access a complete customer data set in their downstream tools regardless of which platform it came from originally.

- Improved efficiency

With numerous teams requiring customer data to support their business initiatives, it was important for the solution to support various teams’ needs without requiring engineering to perform numerous use-case specific implementations.

- Improved data quality

There’s no point in working to distribute customer data across the organization if data is duplicative, inaccurate, and untrustworthy. Jordan wanted to implement a Customer Data Platform with centralized data governance and quality controls so that it would be easier to establish a methodical data planning process, improve engineering efficiency, and block bad data from entering downstream marketing and product analytics systems.

Collect and send customer data anywhere with one implementation

After a thorough examination of the CDP market, the team chose to implement mParticle as their Customer Data Platform to improve data quality, increase access to customer data for marketing and product teams, and increase data engineering efficiency.

ChowNow implemented mParticle on their iOS and Android apps and their websites, for both their Marketplace product and their Direct product. After implementing a single SDK on each platform, the team began collecting customer data from across platforms into ChowNow’s mParticle workspaces in real time. As data is collected, it is resolved to holistic customer profiles to create a 360-degree customer view. Next, the ChowNow team is able to connect any customer data in mParticle to their preferred marketing and analytics tools, as well as to Amazon S3, using mParticle’s server-side integrations. As business and market conditions change, the team can easily connect new integrations or deactivate existing ones, and can also filter which specific events and attributes are being forwarded to each destination, all without any engineering work.
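To make the idea of per-destination filtering concrete, here is a minimal sketch in Python. It is not mParticle's API or configuration format: the destination names, event names, attribute names, and the `route` function are all hypothetical, and in the platform itself this filtering is configured in the product rather than written as code.

```python
# Hypothetical forwarding rules: which events each destination receives and
# which attributes are withheld from it. Names are illustrative only.
FORWARDING_RULES = {
    "analytics_tool": {
        "events": {"order_placed", "menu_viewed"},
        "blocked_attributes": set(),
    },
    "messaging_tool": {
        "events": {"order_placed"},
        "blocked_attributes": {"total_cents"},
    },
}


def route(event, rules=FORWARDING_RULES):
    """Return the payload each destination would receive for one event."""
    payloads = {}
    for destination, rule in rules.items():
        # Skip destinations that have not opted in to this event.
        if event["name"] not in rule["events"]:
            continue
        # Drop attributes that are filtered out for this destination.
        attributes = {
            key: value
            for key, value in event.get("attributes", {}).items()
            if key not in rule["blocked_attributes"]
        }
        payloads[destination] = {"name": event["name"], "attributes": attributes}
    return payloads
```

The point of the sketch is that the event is collected once and the filtering decision lives in one table of rules, so changing what a destination receives does not require touching the collection code.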

Improving data quality with Data Master

As all of ChowNow’s customer data was now flowing through a single pipeline, it became critical to ensure that the customer data in mParticle was as high-quality as possible. Garbage into their CDP would mean garbage throughout their entire tech stack. Jordan and his team leverage mParticle’s Data Master toolset to manage data quality within mParticle and block bad data from being sent to downstream tools.

The first (and arguably most important) step to managing data quality is data planning. Before data planning with mParticle, the DNA team was working with an expansive, internally designed Google Sheet. The sheet had become packed with hundreds of comments from different stakeholders, and had become hard to navigate. Furthermore, there was no way for the ChowNow development team to pull that plan into their IDE, making the implementation of new events a tedious process.

With mParticle in place, the team began using mParticle’s purpose-built data planning spreadsheet template to document their plan. The mParticle template makes it easy for Jordan’s team to maintain an organized data plan, and for marketing and product stakeholders to understand what data is being tracked and request new event implementations. Whenever updates are made to the plan, the team uses a pre-built workflow to export the plan as a JSON file and upload it to their mParticle workspace.

With their data plan uploaded to mParticle, events collected into mParticle from numerous platforms are validated against the plan to ensure data quality and surface any plan violations. And with the ability to export the plan from mParticle straight into their preferred programming environment, developers are able to implement new events more efficiently and with lower risk of error.
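As an illustration of what validating events against a plan involves, the following Python sketch checks incoming events against a small in-memory plan. It is a simplified stand-in, not mParticle's data plan schema or validation logic: `EventSpec`, `DATA_PLAN`, `find_violations`, and the event and attribute names are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class EventSpec:
    """Hypothetical plan entry: attributes an event must carry, with types."""
    required: dict[str, type]


# Hypothetical data plan; event and attribute names are illustrative only.
DATA_PLAN = {
    "order_placed": EventSpec(required={"order_id": str, "total_cents": int}),
    "menu_viewed": EventSpec(required={"restaurant_id": str}),
}


def find_violations(event: dict, plan: dict[str, EventSpec]) -> list[str]:
    """Return readable plan violations for one event (empty list if valid)."""
    name = event.get("name")
    spec = plan.get(name)
    if spec is None:
        return [f"unplanned event: {name}"]

    attributes = event.get("attributes", {})
    violations = []
    # Every planned attribute must be present and have the planned type.
    for key, expected_type in spec.required.items():
        if key not in attributes:
            violations.append(f"{name}: missing attribute {key}")
        elif not isinstance(attributes[key], expected_type):
            violations.append(f"{name}: {key} should be {expected_type.__name__}")
    # Attributes that are not in the plan are also surfaced.
    for key in attributes:
        if key not in spec.required:
            violations.append(f"{name}: unplanned attribute {key}")
    return violations
```

For example, an `order_placed` event whose `total_cents` arrives as the string `"12.99"` would be flagged, as would an event named `Order Placed` that does not match any planned event name.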

The data plan is far-and-away my favorite feature in mParticle. It helps us vet and validate data in a centralized tool. Because as long as we know that the data in mParticle is accurate, complete, and consistent, then it’s easy to send that data downstream using mParticle’s integrations.

Manager of Data & Analytics, ChowNow

With data quality errors addressed at the point of collection in a centralized system, Jordan and his team can guarantee that the data being forwarded to different downstream tools is accurate, consistent, and trustworthy. And because their data plan is owned by the DNA team, they can ensure that the structure and naming conventions of marketing and product data are consistent with data being collected by other business units.

Better data activation

With high-quality customer data available across the tech stack, ChowNow’s marketing and product teams have been able to use data more effectively.

Before implementing mParticle, every tool marketing and product used contained a unique, limited data set. Without a centralized place in which to manage data quality, the data across different tools was inconsistent and often untrustworthy.

With mParticle in place, the team is able to power every tool in their stack with the same high-quality data set, keeping data taxonomy consistent across apps. This enables the marketing and product departments to understand how data is activated in various tools and to use customer data with greater confidence, and it has helped foster a data culture across the organization. As Jordan describes, “it is much more common today with mParticle in place for the product team to be referencing specific data in their prioritization of given features and opportunity sizing.”

And because mParticle collects, manages, and forwards customer data in real time, ChowNow has been able to execute real-time use cases, such as trigger-based messaging. “The real-time component would have required two or three additional tools to build internally within our Snowflake data warehouse,” explains Jordan. As the marketing team continues to develop their programs and set up new customer experiences, it’s really easy for them to review the mParticle data plan, understand what data is being tracked, and request the implementation of new events.

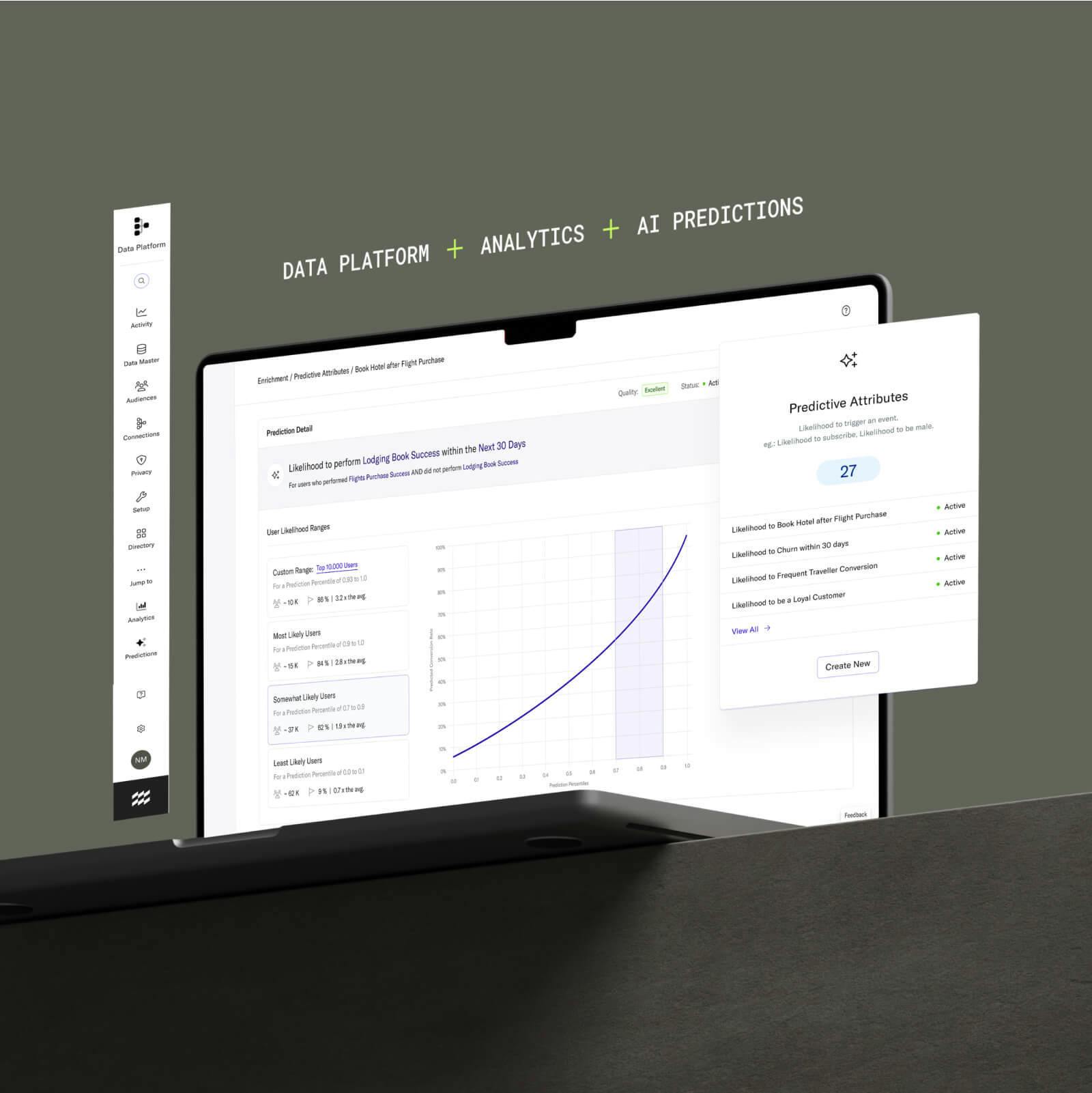

In addition to sending data from mParticle to downstream tools, Jordan and his team also use Feed integrations and custom events to forward data from SaaS tools to mParticle, where it is unified with user data captured by the respective mParticle SDKs. By using LaunchDarkly and mParticle, for example, the team collects feature flag assignment events into mParticle, where they are unified with product engagement events in real time. When this data is then forwarded to analytics tools for reporting, the product team is able to evaluate experiments and make better decisions.
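The value of having assignment events and engagement events in one stream can be sketched as follows. This Python example is only an illustration of the analysis that unified data makes possible; it is not ChowNow's reporting logic, and the event shape (`user_id`, `name`, `flag`, `variation`, `ts`), the `flag_assigned` event name, and the `conversion_by_variation` function are all hypothetical.

```python
from collections import defaultdict


def conversion_by_variation(events, flag_key, goal_event):
    """Share of assigned users per variation who fired goal_event afterwards."""
    # user_id -> (variation, assignment timestamp); the first assignment wins.
    assigned = {}
    for e in sorted(events, key=lambda e: e["ts"]):
        if e["name"] == "flag_assigned" and e["flag"] == flag_key:
            assigned.setdefault(e["user_id"], (e["variation"], e["ts"]))

    # A user converts only if the goal event happens at or after assignment.
    converted = set()
    for e in events:
        if e["name"] == goal_event and e["user_id"] in assigned:
            if e["ts"] >= assigned[e["user_id"]][1]:
                converted.add(e["user_id"])

    totals = defaultdict(int)
    wins = defaultdict(int)
    for user_id, (variation, _) in assigned.items():
        totals[variation] += 1
        wins[variation] += user_id in converted
    return {variation: wins[variation] / totals[variation] for variation in totals}
```

Because both event types share a user identifier, the join is a simple lookup. Without the assignment events in the same data set, there would be no way to attribute a down-funnel event to a variation.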

We now have the ability to confidently measure the results of new features when we run an experiment. When we run those experiments, mParticle is crucial in that because mParticle ingests the LaunchDarkly assignment event data and tracks all the down-funnel events. Without that, we wouldn’t be able to evaluate our experiments.

Manager of Data & Analytics, ChowNow

mParticle has helped transform the way in which teams across ChowNow use and think about customer data. With the ability to manage data quality within a single system, and to connect high-quality customer data to marketing, analytics, and measurement tools without use-case-specific development work, the teams are able to use data with more confidence and solve business challenges faster.

As he looks towards the future, Jordan is excited to dive deeper into data quality management with Data Master. By defining a list of expected values for any given event, for example, the team will be able to begin performing value-based validations. And by surfacing flagged violations to Product/Engineering, they will be able to fix implementation errors more quickly.
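A value-based validation of the kind described here could look roughly like the Python sketch below. It assumes a hypothetical mapping from an event and attribute pair to its set of expected values; `EXPECTED_VALUES`, `value_violations`, and the event and attribute names are illustrative and do not reflect how Data Master represents or enforces these rules.

```python
# Hypothetical expected values per (event name, attribute name).
EXPECTED_VALUES = {
    ("order_placed", "fulfillment_method"): {"pickup", "delivery"},
    ("order_placed", "platform"): {"ios", "android", "web"},
}


def value_violations(event, expected=EXPECTED_VALUES):
    """Flag attribute values that fall outside the expected set for an event."""
    name = event["name"]
    violations = []
    for key, value in event.get("attributes", {}).items():
        allowed = expected.get((name, key))
        # Attributes with no expected-value list are not checked here.
        if allowed is not None and value not in allowed:
            violations.append(f"{name}.{key}: unexpected value {value!r}")
    return violations
```

A check like this catches errors that a type check cannot, such as `"Delivery"` being sent where `"delivery"` is expected, which would otherwise split one category into two in downstream reports.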