A single customer view is not the goal (it's the result)

Companies have long tried to establish a single customer view, but few have been able to put a solution into place that addresses the cross-functional needs of stakeholders. The problem is that a single customer view is often seen as the goal of processes, rather than the result. Learn how to create a single view of the customer by enforcing an organization-wide commitment to data quality and collaboration catalyzed by a sound data design process.

TL;DR

While technology commoditizes and code changes, data persists. Having the right data design in place ensures greater utility of existing tools and is mandatory to establish the Single Customer View, the foundation for scalable Machine Learning.

The main challenges for teams around customer data are two-fold:

- Different teams with different needs use customer data across the entire product lifecycle, but customer data is rarely managed holistically across these phases. Consequently, data management and accessibility are siloed, both organizationally and technically. The result is an incomplete view of the customer; put another way, the sum of the parts is less than the whole.

- Data quality is always either improving or degrading, and degrading is the default state. The effects compound across product cycles and product portfolios: without continuous and coordinated investment in data quality management, data quality inevitably degrades.

Teams must address these issues if they want to leverage their customer data to improve customer engagement and optimize business outcomes at scale. Data requirements across the business continually evolve in tandem with the product development lifecycle, external factors such as privacy regulation, and changes in platform policies. The foundation for durable success is a unified customer data pipeline that contemplates the collective customer data needs across the business, and that foundation begins with intelligent data design.

Customer data challenges throughout the product lifecycle

More customer data is created and consumed than ever before, and almost every company is struggling to wrangle all of this data across several applications, systems, and endpoints. However, maximizing customer data’s value to drive multi-channel personalization and engagement, along with the underlying measurement capabilities, requires more than just access to a set of tools. Optimal utilization begins with the right data strategy and requires collaboration across data consumers within an organization.

While it is common knowledge that a brand’s customer data is one of its most important assets, organizations continue to struggle to manage it properly despite considerable investments in open systems, SaaS platforms, and internal engineering capabilities. At first pass, there may not seem to be an obvious technical explanation for why customer data remains siloed, why its quality is unpredictable, and why it is difficult to orchestrate across an organization. Even teams that have undertaken a data planning exercise and created mappings, taxonomies, and similar artifacts still find themselves struggling to leverage their customer data properly. It is not uncommon for teams to be unable to pinpoint the source of their data challenges, which frequently turns out to be how customer data is created, managed, and utilized throughout the entire product lifecycle.

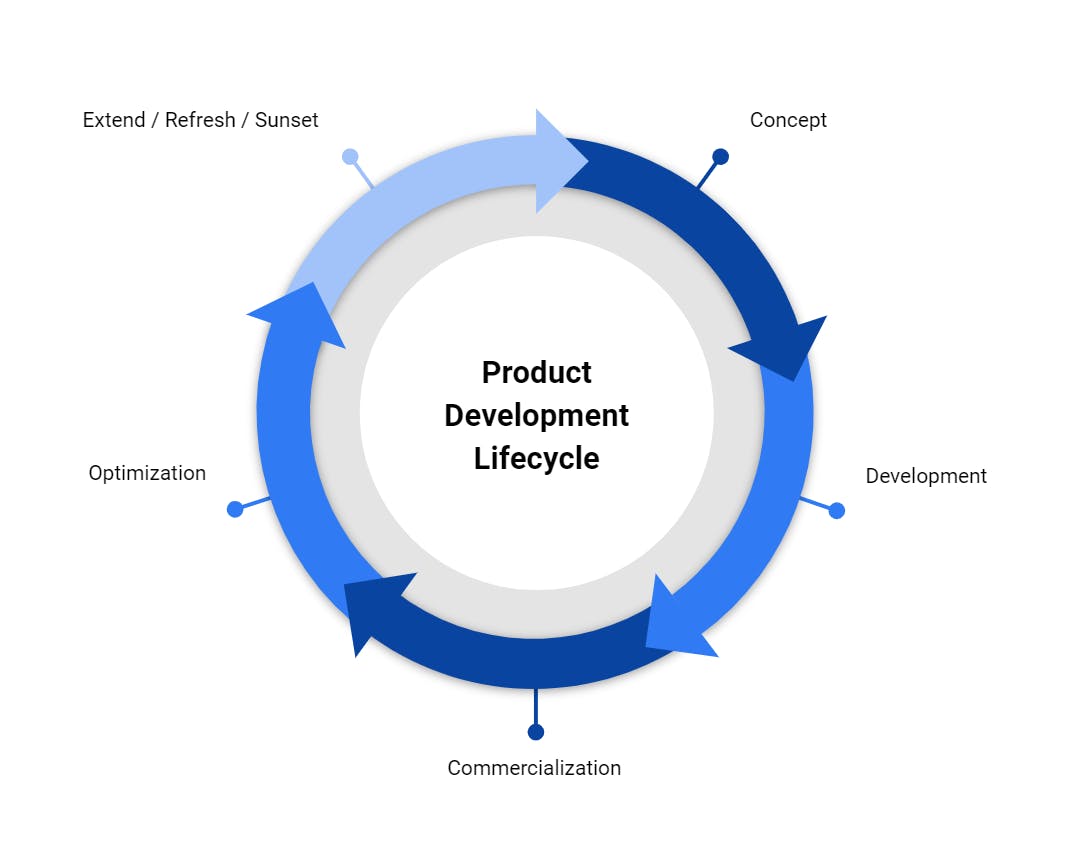

The following stage diagram generally represents the product lifecycle for digital offerings:

Customer data is created and used within and across all stages of the product lifecycle. Different teams have different needs and leverage various tools, each of which consumes a relevant subset of customer data to function. While product stages are theoretically tightly coordinated, in practice each stage is executed by different teams with their own priorities and internal KPIs. Engineering may focus on software quality and release deadlines, whereas Marketing will own customer acquisition and retention. Customer data needs and requirements are subordinated to these goals. In effect, no holistic customer data management framework is in play to any significant extent.

The greatest gaps in customer data requirements occur between the Development and Commercialization phases. Despite their best efforts to coordinate, teams often find themselves at odds, as each prioritizes its own objectives with little incentive to consider other teams’ needs. Product teams generally feed customer data into product analytics tools, like Amplitude or Mixpanel. In contrast, Commercialization teams must operationalize significant amounts of data generated from the various customer engagement channels (paid, owned, and earned) across partners ranging from Facebook, Google, and The Trade Desk to Braze and Iterable, and usually dozens more.

Unfortunately, the white space between these phases creates many opportunities for data quality issues. While data quality issues can happen at any phase, they are more pronounced during the Optimization phase. At this point, data success becomes a team sport, and everyone must collectively turn their focus to retention and lifetime value maximization efforts. Impairment via bad data during the Optimization phase jeopardizes long-term success and has compounding impacts on future product cycles.

As a consequence, the following negative symptoms are commonly observed:

- Application customer data is managed separately from marketing customer data.

- Customer data management is coordinated in a loose (often ad hoc) fashion.

- Frequent instrumentation and quality efforts are required for customer engagement and CX needs.

- Data quality degrades across release cycles.

- Data quality fixes cannot be applied retroactively.

- Leveraging data across product portfolios is problematic.

- Customer experiences are disjointed and inconsistent across engagement channels.

- Even if anticipated, regulatory or governance changes serve as a force multiplier and are highly disruptive to productivity.

As a product matures, customer data utilization begins to span a complex environment of cross-functional workflows and internal and external platforms, reinforcing the compounding effects of data quality in either a positive or negative direction.

Data design overview

Much like the product design process, where teams map out all the ways products will be consumed and pinpoint improvements to optimize the customer experience, the same governing principles can support the business' data collection and consumption needs. Investing in data design increases customer data's effectiveness across the entire product lifecycle for the individual teams and the organization as a whole. After all, as technologies commoditize and code changes, data persists. Due to this, there is a significant premium attached to intelligent data design.

A cross-functional data design exercise provides four critical functions:

- Identify cross-stage data dependencies

- Create and enforce data quality standards

- Address governance and privacy requirements

- Establish cross-functional processes on customer data before major initiatives start

Investing in data design as a precursor to Customer Data Infrastructure enables teams to implement a truly holistic management framework for customer data across the entire product lifecycle. In our experience, such a framework is key to creating a “data flywheel,” in which data continually increases in both quality and value as it drives positive business impact.

Data design is on the critical path of any customer engagement strategy because it ensures that customer data is structured correctly for consumption needs across the entire product lifecycle. Most importantly, teams should experience a customer data flywheel, in which data quality and the data’s impact on the product cycle improve over time.

When addressing data quality, we are specifically focused on the informative ability of the data. As previously mentioned, data quality has a compounding effect over time. Poor data quality increasingly degrades performance, while high-quality data improves performance over time. Data quality rarely remains static; in fact, we have never seen a "set it and forget it" strategy successfully executed over any meaningful timespan. If your data quality is not improving, it is degrading.

The data design process is a cross-functional exercise that addresses these critical factors:

- The interdependence of data decisions across product phases

- Best-of-breed tool deployment

- Data quality and standards

- Privacy and regulatory requirements

- Governance

- Cross-product/portfolio data usage

- Customer experience optimization

- Ecosystem integration

By aligning the data design process to the product lifecycle, mParticle creates a holistic and actionable strategy.

Data design process

A successful data design process serves as the foundation for your customer data infrastructure, enabling the implementation of a platform like mParticle. The goal is to develop a detailed and future-proof data plan that addresses all stakeholders’ needs individually and collectively. The process should manage both data that lives in existing systems and data captured as close to the source as possible. In doing so, the net result is a single customer view that can be leveraged across any system or tool, by any team, at any phase of the product lifecycle.

A data design process addresses seven key considerations for organizations attempting to create a single customer view, ensuring that the organization’s collective needs are met. We will go into more detail on how we guide our customers through the data design process in a subsequent post, but highlighting the most important steps here will set the stage.

- Systems mapping

- Naming standards and conventions

- Identity strategies

- Analytics needs

- Personalization and engagement requirements

- Governance and enforcement model

- Privacy regimes
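To make the naming standards and enforcement considerations above more concrete, the following is a minimal sketch of how a data plan might be checked in code. It is illustrative only and does not represent mParticle's API: the snake_case convention, the `EventSpec` class, the `validate_event` function, and the `purchase_completed` event are all hypothetical names chosen for this example.

```python
from __future__ import annotations

import re
from dataclasses import dataclass, field

# Assumed naming convention for this sketch: lowercase snake_case event names.
SNAKE_CASE = re.compile(r"^[a-z][a-z0-9]*(_[a-z0-9]+)*$")


@dataclass
class EventSpec:
    """One planned event: its name and the attributes it must carry."""
    name: str
    required_attributes: dict[str, type] = field(default_factory=dict)


def validate_event(event: dict, plan: dict[str, EventSpec]) -> list[str]:
    """Return a list of violations; an empty list means the event conforms."""
    errors = []
    name = event.get("name", "")
    if not SNAKE_CASE.match(name):
        errors.append(f"event name {name!r} is not snake_case")
    spec = plan.get(name)
    if spec is None:
        # Unplanned events cannot be checked any further.
        errors.append(f"event {name!r} is not in the data plan")
        return errors
    attrs = event.get("attributes", {})
    for key, expected in spec.required_attributes.items():
        if key not in attrs:
            errors.append(f"missing attribute {key!r}")
        elif not isinstance(attrs[key], expected):
            errors.append(f"attribute {key!r} should be {expected.__name__}")
    return errors


# Hypothetical data plan containing a single event.
PLAN = {
    "purchase_completed": EventSpec(
        "purchase_completed", {"order_id": str, "revenue": float}
    ),
}

good = {"name": "purchase_completed", "attributes": {"order_id": "A-1", "revenue": 19.99}}
bad = {"name": "PurchaseCompleted", "attributes": {"order_id": "A-1"}}

print(validate_event(good, PLAN))
print(validate_event(bad, PLAN))
```

Running a check like this at the point of collection, rather than in downstream systems, is one way to keep naming and quality standards from drifting across release cycles.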

Customer data lifecycle

The following sections outline how customer data flows through the product lifecycle. We look at the common tools, processes, and data assets involved at each stage of the cycle and highlight dependencies that occur across stages.

Concept phase

The focus of the concept phase of the product development lifecycle is the optimal product selection/prioritization. During this process, teams will review the available data they have—existing customer data, data from research, or purchased data—to understand their “best” product opportunities.

Generally, the more comprehensive, accurate, and recent data assets are, the better the insights brands can derive from them. An important concept here: data from marketing, customer support, social channels, and similar sources is critical to understanding potential CAC, churn, and LTV outcomes for new products. Data quality and the ability to unify data sets (application + marketing + engagement) significantly impact the quality of the analysis of new products.

The main deliverables of this process are product prioritization with associated business plans.

The functional teams managing this process are Product Management and/or Marketing with support from Data Science teams.

Data orchestration features assist in the ‘concept’ phase in addition to the data quality and unification features. These allow brands to aggregate and enhance data and instantiate the various tools needed for performance research.

| Data consideration | Description |
|---|---|
| Processes | Business model planning forecasting; Customer research; Data analysis; Surveys; R&D; Prototyping |
| Assets | Comprehensive customer data history; Syndicated data enrichment; Research results/deliverables |
| Tools | Enterprise data warehouse/data lake; BI systems; ML toolsets; Third-party research platforms |

Development phase

The product development phase is focused on delivering a commercially viable product. There is a heavy emphasis on functional design and customer requirements. Unless specific data design processes are implemented, it is during this phase when several customer data challenges are introduced:

- Data needed for commercialization/optimization activities are often ignored, which results in the need for significant post-commercialization instrumentation.

- A lack of data standards required to address the full product lifecycle results in data hygiene issues and poor quality data in downstream systems.

- Machine learning processes for optimization rely heavily on the decisions made during development. Lack of data quality/unification considerations creates heavy processing workloads that slow ML processes.

- A lack of attention to governance and regulatory requirements for commercialization, and especially for extensions (cross-promotions, international expansion), hinders future product cycles.

| Data consideration | Description |
|---|---|
| Processes | Product design re: customer data; Customer journey design; Customer accounts; Security; Personalization; Recommendation; Organic promotion (social); Third-party vendor integration; Data standard definition/application; Product KPI definition; MVP iterations |
| Assets | Data plan; MVP feedback (limited customer data); Customer research results |
| Tools | Application databases (DBs); Vendor API/SDKs; Application Performance Management (APM); Crash reporting |

Commercialization phase

The product is officially brought to market during the commercialization phase, also referred to as the launch phase. The primary goal is to gain traction within the marketplace from a customer adoption standpoint. Various models are tested to prove or disprove various commercial hypotheses. For more mature organizations, financial goals are set as KPIs.

This phase is usually led by Product Management and Marketing. Engineering has an intense fit-and-finish process at the start of commercialization. In mature organizations and in regulated industries such as fintech and healthcare, Legal and compliance teams will have specific needs.

The transition from development to commercialization can be challenging organizationally as marketing processes and the marketing technology ecosystem become engaged: customer data and market feedback arrive quickly and in high volume. Time pressure may mount, as competitors will begin fast-following as soon as market validation begins. At the same time, product analytics gain clarity as usage begins to scale.

From a customer data standpoint, if there was little consideration to commercialization needs during product development, there may be an intense scramble to instrument numerous marketing tools or invest in customer data infrastructure. This rapid onboarding of marketing tools and/or data infrastructure usually happens at the same time that product analytics are beginning to provide clarity on usage and engagement. There is a high risk of siloed, non-aligned data sets being created during this period.

| Data consideration | Description |
|---|---|
| Processes | Customer engagement analysis; Engagement activities; Paid acquisition; Social/viral promotion; CRM; Affiliate; Cross-promotion; KPI monitoring; Product fit-and-finish; Monetization |
| Assets | Customer profile data; Product usage data; Transaction data; Marketing attribution data; Customer feedback; Audience data; Marketplace data |
| Tools | Product analytics; Marketing automation; Marketing attribution; Paid advertising (DSPs and Walled Gardens); Customer support; Enterprise data warehouse |

Optimization phase

Depending on the product's rate of maturation (or, for organizations with existing product portfolios, their established processes), the optimization phase may begin within weeks after commercialization. With sufficient data volumes and established KPI performance, brands begin to optimize both CX and commercial performance. Often these two efforts are conducted separately and are only loosely coordinated.

For consumer brands, commercial performance is largely determined by customer acquisition cost (CAC), churn, and lifetime value (LTV); average revenue per user (ARPU) is also a commonly used metric. With the support of Data Engineering, Marketing and Product teams will begin experimentation and optimization using marketing attribution, transaction data, customer feedback, and user engagement data.
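As a rough illustration of how these metrics relate to one another, the sketch below uses common simplified definitions. Actual definitions vary by business model; in particular, estimating LTV as ARPU divided by churn is one textbook approximation that assumes constant churn and revenue per period, not a formula prescribed here. All function names and figures are hypothetical.

```python
def customer_acquisition_cost(acquisition_spend: float, new_customers: int) -> float:
    """CAC: acquisition spend divided by customers acquired in the same period."""
    if new_customers <= 0:
        raise ValueError("new_customers must be positive")
    return acquisition_spend / new_customers


def churn_rate(customers_at_start: int, customers_lost: int) -> float:
    """Share of customers present at the start of the period who left during it."""
    if customers_at_start <= 0:
        raise ValueError("customers_at_start must be positive")
    return customers_lost / customers_at_start


def average_revenue_per_user(revenue: float, active_users: int) -> float:
    """ARPU: revenue in the period divided by active users in the period."""
    if active_users <= 0:
        raise ValueError("active_users must be positive")
    return revenue / active_users


def lifetime_value(arpu: float, churn: float) -> float:
    """Simplified LTV: ARPU times expected lifetime in periods (1 / churn)."""
    if churn <= 0:
        raise ValueError("churn must be positive for this approximation")
    return arpu / churn


# Hypothetical monthly figures, for illustration only.
cac = customer_acquisition_cost(50_000.0, 1_000)
churn = churn_rate(2_000, 100)
arpu = average_revenue_per_user(40_000.0, 2_000)
ltv = lifetime_value(arpu, churn)

print(f"CAC={cac:.2f} churn={churn:.1%} ARPU={arpu:.2f} LTV={ltv:.2f}")
print(f"LTV:CAC ratio = {ltv / cac:.1f}")
```

Each input comes from a different system (ad spend from marketing tools, revenue from transaction data, activity from product analytics), which is why these calculations depend on unified, consistent customer data.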

Product and Engineering will focus on customer engagement via on-property personalization. This process will leverage product analytics, customer data, and any data enrichment opportunities that are available. Data engineering will assist in these efforts. Data unification and enrichment from the various commercialization systems will occur during this phase, mostly based on customer identity.
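The idea of unifying data from multiple systems around customer identity can be sketched as follows. This is a deliberately simplified illustration that assumes a single shared identifier (a normalized email address) and a "first value wins" merge rule. Production identity resolution typically handles multiple identifiers, priorities, and conflicts, and this sketch does not represent any particular vendor's implementation. The `unify_profiles` function and the sample records are hypothetical.

```python
from collections import defaultdict


def unify_profiles(records, identity_key="email"):
    """Group records from different systems into one profile per identity."""
    profiles = defaultdict(lambda: {"sources": []})
    for record in records:
        identity = record.get(identity_key)
        if identity is None:
            # No shared identifier: this simple sketch cannot unify the record.
            continue
        key = identity.strip().lower()  # normalize so variants match
        profile = profiles[key]
        profile["sources"].append(record["source"])
        for field_name, value in record.items():
            if field_name not in ("source", identity_key):
                # First value wins; later records do not overwrite it.
                profile.setdefault(field_name, value)
    return dict(profiles)


# Hypothetical records from three systems.
records = [
    {"source": "product_analytics", "email": "Ana@Example.com", "last_screen": "checkout"},
    {"source": "marketing_automation", "email": "ana@example.com ", "campaign": "spring_sale"},
    {"source": "support", "email": None, "ticket": 42},
]

unified = unify_profiles(records)
print(unified)
```

Note that the support record is dropped because it carries no identifier. This is the kind of gap that an identity strategy defined during data design is meant to prevent.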

It is during the optimization phase that data quality and standards have a significant impact. A lack of planning for optimization needs during the development phase will impair, and may even prevent, some initiatives. On the other hand, if data design has been successful, the transition to optimization will be marked by all teams having access to customer data in an optimal state across all systems. Advanced analyses such as fraud detection, anomaly detection, and predictive customer scoring also start during this phase, led by data engineering.

| Data consideration | Description |
|---|---|
| Processes | Customer experience (CX) personalization; Marketing optimization; Channel or partner optimization; Ad spend optimization; CAC/Churn/LTV analysis; Data unification; Fraud/verification |
| Assets | Enhanced customer data; Established KPIs; Attribution data |
| Tools | Largely the same as commercialization |

Extension phase

After optimization, Product and Marketing teams begin to plan for the product's future. For successful products, product extensions and refreshes are typical considerations. For companies with product portfolios, cross-promotional offerings and bundling opportunities may be considered. Once sufficient progress is made on the extension phase, the product enters the concept phase along with other product initiatives for analysis/selection.

This phase focuses on experimentation and analysis, so on-demand access to high-quality customer data is highly valuable. As with the concept phase, high-quality, unified data sets are paramount to forming accurate insights. This phase highlights the compounding effects of data design: strong data design earlier in the product lifecycle strengthens the next product cycle.

| Data consideration | Description |
|---|---|
| Processes | Customer data mining; Customer research; Cross-selling/promotion experiments; Data unification |
| Assets | Historical customer and marketing data assets |
| Tools | ML tools; BI platforms; CRM; EDW |

Value alignment

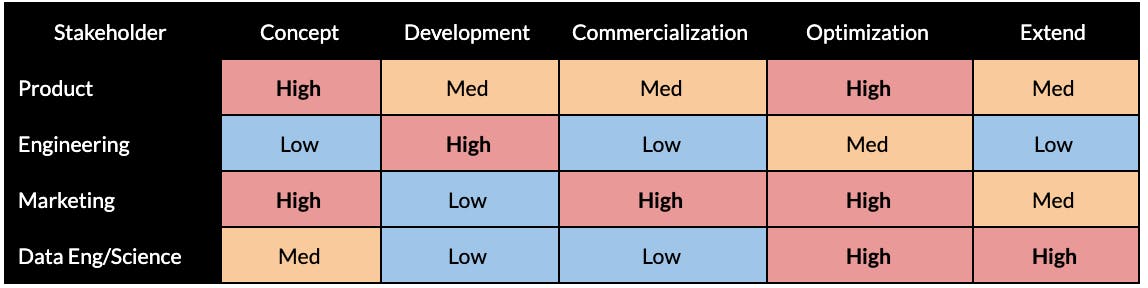

We have long championed the concept of data as a team sport. Given the complexities and requirements for the various personas who either provide access to or consume customer data across an organization, it helps break out the functional needs across these personas along the entire life cycle. For example, this table shows the typical focus of buyers by function by stage:

The core value to each of the functional teams, which varies according to the particular product stage they are in:

Engineering

- A lean tagging approach ensures minimum overhead and maintenance

- Full flexibility to continue to evolve, with the freedom to build what they care about

- Security and the ability to flexibly adjust to evolving governance requirements

Product managers

- Enable product extensions and extend into new product cycles seamlessly

- Protect valuable engineering resources and roadmap execution

- Establish data-driven product development with high-quality data

- Deliver exceptional customer experience via personalization and reduced technical overhead

Marketers

- Create a 360-degree customer view

- Execute advanced, multi-channel personalization strategies

- On-demand access to an ecosystem of tools

- Better attribution and understanding of key channels

Data engineering/Data science

- Improve data quality for machine learning models

- Data unification via advanced identity resolution

- Orchestration to apply insights in real time

Conclusion

Companies have long tried to establish a single customer view, and there are many opinions on how best to do this, depending on the vendors you ask. Several approaches may make sense for a certain function, such as marketing, but do not represent a complete solution.

The best path to actualize a true single customer view for the business is via implementing a unified customer data pipeline that addresses the cross-functional needs of stakeholders across the organization. The implication here is that the single view of the customer is the result, not the goal. A single view of the customer results from an organization-wide commitment to data quality and collaboration catalyzed by a sound data design process. It materializes via investment in Customer Data Infrastructure, such as mParticle.